Chapter I: Counting the Nines — Where Quantum Actually Stands

1.1 The Hardware Landscape: An Honest Taxonomy

Quantum computing is not one technology. It is at least six competing technologies, each encoding quantum information in fundamentally different physical systems. Unlike artificial intelligence, where the Transformer architecture emerged as the dominant paradigm by the late 2010s, quantum computing has not converged on a single winner. This is both a risk (fragmentation of R&D investment, incompatible software ecosystems) and an opportunity (diversified bets, potential for surprise breakthroughs from unexpected directions).

Imagine several groups all trying to build the first airplane, but one uses wood-and-fabric biplanes, another attempts jet engines, a third builds helicopters. They're all "flying machines," but the engineering is totally different. Nobody knows which approach will win, and more than one may succeed for different purposes.

The six primary qubit modalities, as of early 2026, are:

- Superconducting circuits (Google, IBM, Rigetti)

- Trapped ions (Quantinuum, IonQ)

- Neutral atoms (QuEra, Pasqal, Atom Computing)

- Photonic systems (PsiQuantum, Xanadu)

- Topological qubits (Microsoft)

- Silicon spin qubits (Intel, Diraq, Silicon Quantum Computing)

Each occupies a different position on the axes that matter: gate fidelity, coherence time, qubit count, connectivity, and scalability. No modality leads on all axes simultaneously.

Superconducting Qubits

Superconducting qubits are the most mature modality and the one with the largest installed base. Google’s Willow chip (105 qubits, December 2024) and IBM’s Nighthawk processor (120 qubits, November 2025) represent the current state of the art. Their key advantage is speed: gate times of tens to hundreds of nanoseconds, orders of magnitude faster than trapped ions. They are also fabricated using processes adapted from semiconductor manufacturing, which in principle allows them to leverage decades of industrial fab expertise.

Superconducting qubits are tiny circuits cooled to nearly absolute zero. Their biggest strength: they're blindingly fast. Their biggest weakness: they must be kept colder than deep space, and each qubit can only talk to its nearest neighbors—like students who can only pass notes to the person sitting next to them.

Google Willow’s headline numbers: 105 qubits, average T1 (relaxation time) of approximately 68 microseconds, and the first below-threshold surface code error correction[3], with a distance-7 code achieving 0.143% error per cycle. IBM’s third-revision Heron chips have achieved two-qubit gate fidelities above 99.9% for more than half of tested pairs, and Nighthawk’s 120-qubit square lattice[4] with 218 tunable couplers enables circuits of up to 5,000 two-qubit gates.

The binding limitation of superconducting qubits is twofold: they require millikelvin operating temperatures (dilution refrigerators), and they have limited connectivity (typically nearest-neighbor on a 2D grid). This limited connectivity makes error correction expensive—the standard surface code requires roughly 1,000 physical qubits per logical qubit at current fidelities. IBM is actively working to address this with “c-couplers” that enable longer-range qubit connections, demonstrated on the experimental Loon processor in November 2025.

The core problem: you currently need a huge number of physical qubits just to make a single reliable one. Scaling up to solve commercially interesting problems would require millions of hardware qubits, far beyond anything built today. Reducing that ratio is the central engineering challenge.

A critical materials-science breakthrough emerged from Princeton in November 2025: a transmon qubit achieved a coherence time exceeding one millisecond[1], three times the previous record and fifteen times the industry standard. This was accomplished through improved fabrication techniques, specifically the use of tantalum rather than traditional niobium. If this result can be replicated at scale in commercial processors (a significant engineering challenge), it could substantially improve the error correction outlook for superconducting architectures.

Trapped Ions

Trapped-ion quantum computers, led by Quantinuum (majority-owned by Honeywell) and IonQ, use individual atomic ions suspended in electromagnetic traps as qubits. Their defining advantage is quality: Quantinuum’s Helios system achieves 99.921% two-qubit gate fidelity and 99.9975% single-qubit gate fidelity, the highest of any commercial system. Ions also provide all-to-all connectivity (any qubit can interact with any other qubit), which dramatically reduces the overhead required for error correction.

Instead of tiny circuits, this approach uses actual atoms floating in a vacuum, manipulated by lasers. The key advantage: any atom can "talk" to any other, not just its neighbors—like a classroom where every student can whisper to everyone, instead of passing notes through a chain. This full connectivity makes error correction far more efficient.
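A rough way to see what nearest-neighbor connectivity costs is to count the SWAP gates needed before two distant qubits can interact. The sketch below assumes an idealized 2D grid in which each SWAP moves a qubit by one site and a two-qubit gate requires adjacent qubits. The function names and the grid model are illustrative assumptions, not a description of any vendor's compiler.

```python
def swaps_on_grid(a: tuple[int, int], b: tuple[int, int]) -> int:
    """SWAPs needed to bring qubit `a` next to qubit `b` on a 2D grid.

    Assumption: one SWAP moves a qubit by one lattice site, and the two
    qubits must be adjacent (Manhattan distance 1) before they can interact.
    """
    distance = abs(a[0] - b[0]) + abs(a[1] - b[1])
    return max(distance - 1, 0)


def swaps_all_to_all(a: int, b: int) -> int:
    """With all-to-all connectivity, any pair can interact directly."""
    return 0


if __name__ == "__main__":
    # Opposite corners of a hypothetical 10x10 grid are 18 sites apart.
    print("grid SWAPs:", swaps_on_grid((0, 0), (9, 9)))
    print("all-to-all SWAPs:", swaps_all_to_all(0, 99))
```

Every extra SWAP is itself a noisy operation (it is commonly decomposed into three CNOT gates), so routing overhead consumes part of the error budget on a fixed grid, while an all-to-all machine avoids it in this simplified model.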

Helios represents a genuine architectural leap over its predecessor, the H2 system. Where H2 used a linear “racetrack” design with 56 qubits, Helios introduces a ring-storage architecture[2] with a first-of-its-kind commercial ion junction, enabling parallel sorting, cooling, and gating operations. The system produced 94 error-detected logical qubits globally entangled in one of the largest Greenberger-Horne-Zeilinger states ever recorded, using the [[4,2,2]] Iceberg code—a distance-2 code that detects errors via post-selection (discarding faulty results) rather than actively correcting them.

Through code concatenation with symplectic double codes, the system also demonstrated 48 logical qubits at the landmark 2:1 encoding ratio. This is a significant efficiency achievement, but it is important to note that post-selection-based error detection is a less powerful capability than the active error correction (requiring distance-3+ codes) needed for fault-tolerant computation at scale.
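The difference between detecting and correcting errors can be made concrete with a small syndrome calculation. The sketch below assumes the textbook stabilizers XXXX and ZZZZ for a [[4,2,2]] code (the report does not spell them out) and checks which Pauli errors they flag. It is a toy model with hypothetical helper names, not Quantinuum's implementation.

```python
# Assumed stabilizer generators of a [[4,2,2]] code, written as Pauli strings.
STABILIZERS = ["XXXX", "ZZZZ"]


def anticommutes(p: str, q: str) -> bool:
    """Two Pauli strings anticommute when they differ on an odd number of
    positions at which both are non-identity."""
    clashes = sum(1 for a, b in zip(p, q) if a != "I" and b != "I" and a != b)
    return clashes % 2 == 1


def syndrome(error: str) -> tuple[int, ...]:
    """One bit per stabilizer: 1 means that stabilizer check flags the error."""
    return tuple(int(anticommutes(error, s)) for s in STABILIZERS)


def single_qubit_errors(n: int = 4):
    """Yield every weight-1 Pauli error on n qubits."""
    for pos in range(n):
        for pauli in "XYZ":
            yield "I" * pos + pauli + "I" * (n - pos - 1)


if __name__ == "__main__":
    for err in single_qubit_errors():
        print(err, syndrome(err))
    # A weight-2 error can slip through unflagged, which is what distance 2 means.
    print("XXII", syndrome("XXII"))
```

Every single-qubit error produces a non-zero syndrome, so the run can be discarded (post-selection). But only three distinct syndromes exist for twelve possible single-qubit errors, so the code cannot tell which qubit failed and therefore cannot repair it. Active correction requires a distance of at least 3.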

Helios crosses from detection to correction (March 2026): In a follow-on result published March 10, 2026, Quantinuum researchers demonstrated active quantum error correction, not just error detection via post-selection, on dozens of logical qubits in Helios. Logical two-qubit gate errors were driven to approximately 1×10⁻⁴, meaningfully below Helios's raw physical two-qubit error rate, and a 94-qubit globally-entangled Greenberger-Horne-Zeilinger state was prepared at 94.9% fidelity[15]. This is the first commercial system to cross the logical-below-physical threshold on a meaningful number of logical qubits, and it is the single most important data point to land since this report's February 2026 cutoff.

The trapped-ion approach's primary limitation is speed: gate times are in the microsecond range, roughly 100 to 1,000 times slower than superconducting gates. Scaling is also an open question. Quantinuum’s QCCD (Quantum Charge-Coupled Device) architecture plans to scale via junctions arranged in a “city street grid,” but this has not yet been demonstrated beyond Helios’s single junction. The company’s roadmap calls for Sol (192 physical qubits) by 2027 and Apollo (thousands of physical qubits, hundreds of logical qubits, full fault tolerance) by 2029.

IonQ, meanwhile, has pursued an aggressive acquisition strategy in 2025, purchasing Oxford Ionics, ID Quantique, Lightsynq, Capella Space, and Vector Atomic in deals exceeding one billion dollars, positioning itself as a vertically integrated quantum company. Its Forte Enterprise system operates with 36 qubits, and in March 2025, a collaboration with Ansys reported a 12% speedup[5] over classical high-performance computing on a single medical device simulation instance. This result has not been independently replicated, the quality of the classical baseline has not been independently verified, and a 12% margin on a single problem instance does not yet constitute "practical quantum advantage" by rigorous standards—particularly given that the quantum computation likely cost orders of magnitude more per compute hour than the classical alternative.

IonQ networks two trapped-ion QPUs (April 14, 2026): On World Quantum Day, IonQ demonstrated the first commercial photonic interconnect between two trapped-ion quantum processors—generating, transmitting, and detecting photons to entangle qubits on separate systems. The milestone is AFRL-funded and directly attacks the modular-scaling constraint this report identifies as the binding wall past ~1,000 physical qubits. IonQ's 99.99% two-qubit gate fidelity is a separate 2025 intra-QPU result, not a networked-link figure[16]. On the same day, IonQ was selected for DARPA's Heterogeneous Architectures for Quantum (HARQ) program—announced April 14, 2026 and complementary to QBI—to build quantum memories using nitrogen-vacancy centers in synthetic diamond[17]. On April 22, IonQ followed with a detailed fault-tolerant architecture paper targeting 10,000+ physical qubits; that is useful specificity for judging its roadmap, but it remains an engineering plan rather than an independently validated milestone[27].

The Ansys result in plain terms: a quantum computer reportedly edged out a traditional supercomputer on a real engineering problem. The margin was modest and unverified, but it was one of the first times anyone could even make that claim. Think of it as a prototype car completing one lap slightly faster than the reigning champion: noteworthy, but far from winning the race.

Neutral Atoms

Neutral-atom quantum computers, pioneered by QuEra, Pasqal, and Atom Computing, trap individual atoms (typically rubidium or ytterbium) in arrays of focused laser beams called optical tweezers. Their key advantage is natural scalability: it is relatively straightforward to create large arrays of hundreds or thousands of atomic sites. Atom Computing has demonstrated 1,180 atomic sites[6], and QuEra has operated with 256 qubits.

Imagine using tiny laser "tweezers" to pick up individual atoms and arrange them like pieces on a chessboard, rearranging patterns during computation. This approach is younger than the others, but it's scaling fast.

The architecture offers reconfigurable geometry—atoms can be rearranged in arbitrary patterns during computation—which enables native support for certain error correction codes and algorithms that would require costly SWAP operations on fixed-grid architectures. Ground-state coherence times are on the order of seconds, vastly exceeding superconducting systems.

The limitation is gate maturity: two-qubit gate fidelities are improving rapidly but remain below those of trapped ions and the best superconducting systems, currently around 99.5%. Mid-circuit measurement—essential for real-time error correction—is still under development. However, QuEra’s 2025 publications on algorithmic fault-tolerance[7] techniques claiming up to 100× reduction in error correction overhead have generated significant excitement and could be transformative if validated at scale.

Infleqtion joins the public market (February 2026): In February 2026 a fourth neutral-atom company, Infleqtion, became publicly traded. It is the first new public-market quantum pure-play since 2022, and notable because a meaningful fraction of its revenue comes from quantum sensing, not computing. Infleqtion (NYSE: INFQ), spun out of ColdQuanta and headquartered in Boulder, Colorado, closed its SPAC merger with Churchill Capital Corp X on February 13, 2026 (NYSE trading began February 17) at a $1.8 billion transaction value and over $540 million in gross proceeds. As of April 15, 2026, INFQ trades at roughly $14 per share on ~216 million shares outstanding, implying an approximately $3.0 billion market cap[18].

Its Sqale platform reports best-case 99.73% two-qubit gate fidelity and approximately 2.8-second ground-state coherence (with gate-operational coherence shorter by orders of magnitude during Rydberg gates, as across all neutral-atom platforms); these figures are drawn from company disclosures and the UK NQCC announcement rather than a single peer-reviewed experiment. Comparable peer-reviewed neutral-atom two-qubit fidelities (QuEra/Harvard, Pasqal) remain in the 99.5–99.7% band. A 100-qubit Sqale system was delivered to the UK's National Quantum Computing Centre in late 2025 and publicly announced March 16, 2026; it is the UK's only operational 100-qubit machine[19].

CEO Matthew Kinsella's roadmap targets 30+ logical qubits by end of 2026, 100+ by 2028, and 1,000+ by 2030. Infleqtion disclosed $32.5 million of 2025 revenue and guided $40 million for 2026, with a meaningful portion coming from quantum sensing rather than quantum computing, notably Tiqker optical atomic clocks, deployed on a Royal Navy autonomous submarine in October 2025 for GPS-denied navigation[20]. Infleqtion did not advance to DARPA QBI Stage B in November 2025; QBI Stage B is one federal evaluation track among several (HARQ and DoE/NSA programs remain open), so absence from Stage B is a data point, not a verdict.

Photonic Qubits

Photonic quantum computers, pursued by PsiQuantum and Xanadu, encode quantum information in photons—particles of light. Their theoretical advantages are compelling: photons do not decohere in transit (eliminating the coherence-time problem entirely), they operate at room temperature (no dilution refrigerators), and they naturally integrate with telecommunications infrastructure for distributed quantum computing.

Photonic quantum computers use particles of light instead of atoms or circuits. The huge upside: light doesn't "forget" its quantum state, and you don't need giant freezers. The huge downside: photons are extremely hard to make interact with each other, and interaction is exactly what computation requires.

PsiQuantum, the world’s best-funded quantum startup[8] ($1 billion raised in September 2025 at a $7 billion valuation, with additional government backing from Australia), unveiled a photonic processor in February 2025. The company’s strategy bypasses near-term noisy intermediate-scale quantum (NISQ) applications entirely, aiming directly at a large-scale fault-tolerant machine fabricated in conventional semiconductor fabs. Microsoft and PsiQuantum are the two companies[9] that have advanced to the final stage of DARPA's US2QC program (the precursor to the Quantum Benchmarking Initiative).

The fundamental challenge for photonic quantum computing is deterministic two-qubit gates. Photons do not naturally interact with each other, making the entangling operations central to quantum computation extremely difficult. Photonic approaches instead rely on measurement-based quantum computing and fusion operations, which are probabilistic. Overcoming the resulting photon loss and inefficiency remains the core engineering challenge.
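A toy calculation shows why probabilistic entangling operations are costly and why repeating attempts helps. The 50% per-attempt success rate used below is an illustrative assumption, not a figure from this report, and attempts are treated as independent. Real fusion schemes, loss rates, and multiplexing strategies are considerably more involved.

```python
def all_succeed(p_single: float, n_operations: int) -> float:
    """Probability that n independent probabilistic operations all succeed."""
    return p_single ** n_operations


def at_least_one_success(p_single: float, attempts: int) -> float:
    """Probability that at least one of several parallel attempts succeeds."""
    return 1.0 - (1.0 - p_single) ** attempts


if __name__ == "__main__":
    p = 0.5  # hypothetical per-attempt success probability
    print("10 operations in a row, no retries:", all_succeed(p, 10))
    for k in (1, 2, 4, 8):
        print(f"{k} parallel attempts:", at_least_one_success(p, k))
```

Without retries, the chance of a long chain of such operations succeeding collapses quickly. Running several attempts in parallel restores a high success rate, but at the price of many more photons and components per useful operation, which is where the loss and inefficiency problem comes from.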

Topological Qubits

Microsoft’s approach is the most ambitious and the most contested. Topological qubits would encode quantum information in Majorana quasiparticles—exotic collective states of electrons predicted to exist in certain superconducting nanowires. If they work as theorized, topological qubits would be intrinsically protected from local noise, offering built-in error correction and potentially requiring far fewer physical qubits per logical qubit than any other architecture.

Most approaches accept that qubits will be noisy and try to correct errors after the fact. This approach is different: build qubits where information is inherently immune to noise. It's the difference between constantly repairing a sandcastle versus building with concrete that doesn't wash away. It is a beautiful idea, but proving it works has been extremely difficult.

Microsoft announced Majorana 1 in February 2025[10], claiming the creation of the first "topoconductor" and an eight-qubit chip with a "Topological Core" architecture designed to scale to one million qubits. The accompanying Nature paper described interferometric parity measurement in InAs-Al hybrid devices, a prerequisite for topological qubit operation.

However, the Nature editorial team itself noted that the peer-reviewed results “do not represent evidence for the presence of Majorana zero modes[11].” At the APS Global Physics Summit in March 2025, Microsoft’s Chetan Nayak presented additional data to a packed and largely skeptical audience. A preprint by Henry Legg of the University of St Andrews[12] argued that Microsoft’s Topological Gap Protocol—the test used to identify Majoranas—is flawed and susceptible to false positives. A subsequent paper from the University of New South Wales suggested[13] that the decoherence time of Majorana qubits may be too short to support computation. Microsoft vigorously disputes both critiques and says it has made significant additional progress since the Nature paper was submitted in March 2024.

The honest assessment: topological quantum computing remains the highest-risk, highest-reward approach. If Microsoft is right, it could leapfrog all other architectures. If the skeptics are right, the company has spent two decades pursuing a physical phenomenon that may not be practically exploitable. Multiple physicists have noted that even if topological qubits work, the approach is “probably 20-30 years behind[14] the other platforms” (Winfried Hensinger, University of Sussex). DARPA’s advancement of Microsoft to the final stage of US2QC, however, suggests that government evaluators believe the approach has at least a plausible path forward.

Silicon Spin Qubits

Silicon spin qubits, pursued by Intel (Tunnel Falls, 12 qubits), Diraq, and Silicon Quantum Computing, encode quantum information in the spin states of individual electrons or nuclei in silicon. Their potential advantage is enormous: full compatibility with existing CMOS semiconductor fabrication, which could enable mass production using the same factories that make conventional computer chips.

Silicon spin qubits are the "what if we could just use regular chip factories?" approach. If it works, quantum processors could be mass-manufactured on the same lines that make phone chips, an unbeatable cost advantage. The problem: it's the least mature approach, with the smallest demonstrated qubit counts. Even so, DARPA thinks it's worth watching.

The approach is the earliest-stage among commercially pursued modalities, with the smallest demonstrated qubit counts and fidelities that are still climbing toward competitive levels (approximately 99%+). However, three silicon-focused companies (Diraq, Quantum Motion, Silicon Quantum Computing) advanced to Stage B of DARPA’s QBI, suggesting that evaluators see a credible scaling path. If silicon qubits can close the fidelity gap, their CMOS compatibility could make them the long-term winner for mass-manufactured quantum processors.

Each approach leads on different axes. Scores are qualitative assessments (1=weakest, 5=strongest).

1.2 The Error Correction Revolution

Error correction is the most important technical story in quantum computing today. It deserves extended treatment because it is the key that unlocks everything else.

The core problem is straightforward: individual qubits are noisy. Even the best physical qubits in the world—Quantinuum’s barium ions at 99.921% two-qubit gate fidelity—make errors roughly once every 1,200 operations. For commercially useful quantum algorithms like simulating a drug molecule or factoring a large number, you need billions or trillions of operations to complete reliably. At current physical error rates, the computation would be corrupted long before it finished.
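The arithmetic behind this claim is easy to check. The sketch below assumes independent errors and treats every operation as if it had the quoted two-qubit gate fidelity. Both are simplifications, and the function names are illustrative.

```python
import math


def ops_per_error(fidelity: float) -> float:
    """Average number of operations between errors at a given gate fidelity."""
    return 1.0 / (1.0 - fidelity)


def success_probability(fidelity: float, n_ops: int) -> float:
    """Chance that n_ops independent operations all succeed."""
    return fidelity ** n_ops


def log10_success(fidelity: float, n_ops: float) -> float:
    """Base-10 log of the success probability, usable when it would underflow."""
    return n_ops * math.log10(fidelity)


if __name__ == "__main__":
    f = 0.99921  # Helios two-qubit gate fidelity quoted above
    print("operations per error:", round(ops_per_error(f)))
    print("P(success) for 10,000 ops:", success_probability(f, 10_000))
    print("log10 P(success) for 1e9 ops:", log10_success(f, 1e9))
```

Under these assumptions a circuit of only ten thousand gates already succeeds far less than 1% of the time, and a billion-gate algorithm has a success probability so small it can only be expressed as a logarithm.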

Even the best qubit makes mistakes. Solving real-world problems requires billions of steps, so without a proofreading system the answer would be gibberish. Error correction is that proofreading system: it catches and fixes mistakes as they happen.

Quantum error correction (QEC) solves this by encoding one “logical” qubit across many “physical” qubits, using redundancy and continuous error detection to suppress the logical error rate exponentially. The catch: this only works if the physical error rate is below a critical threshold. If physical qubits are too noisy, adding more of them makes things worse, not better.
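The threshold behavior can be illustrated with a commonly used heuristic scaling law, in which the logical error rate goes as (p / p_th) raised to the power (d + 1) / 2 for code distance d. The 1% threshold and the 0.1 prefactor below are illustrative assumptions, not values taken from this report, and the formula is a rule of thumb rather than an exact result.

```python
def logical_error_rate(
    p_phys: float,
    distance: int,
    p_threshold: float = 0.01,  # assumed threshold, for illustration only
    prefactor: float = 0.1,     # assumed constant, for illustration only
) -> float:
    """Heuristic logical error rate per cycle, capped at 1.0."""
    rate = prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)
    return min(rate, 1.0)


if __name__ == "__main__":
    for label, p in (("below threshold", 0.001), ("above threshold", 0.02)):
        rates = [logical_error_rate(p, d) for d in (3, 5, 7)]
        print(label, rates)
```

Below the assumed threshold, each step up in distance multiplies the error rate by a number smaller than one, so adding qubits helps. Above it, the same step multiplies the error rate by a number larger than one, so adding qubits hurts.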

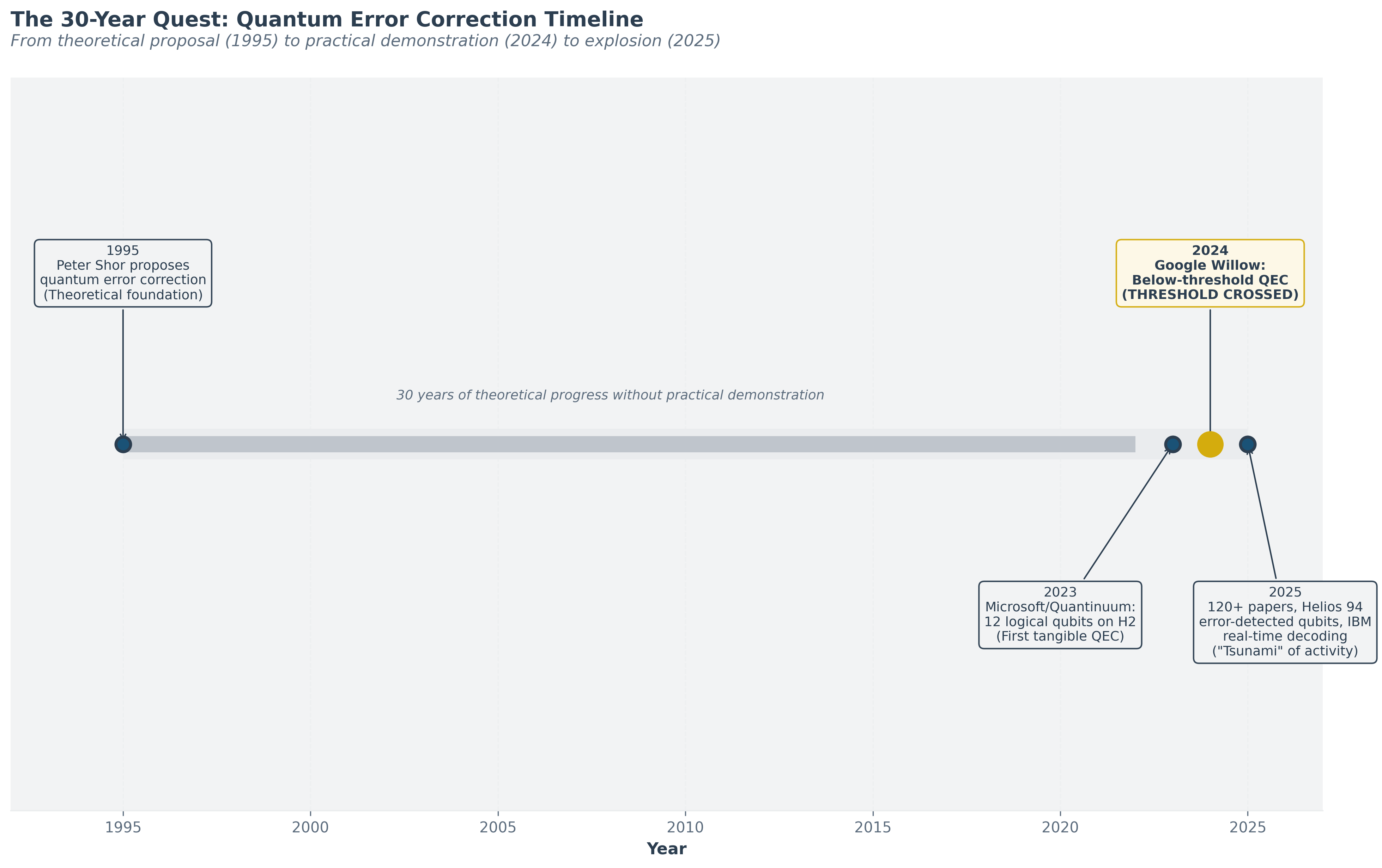

The history of QEC is a story of a thirty-year quest to cross that threshold:

1995: Peter Shor proposes quantum error correction. The theoretical possibility is established, but the required physical qubit quality seems impossibly far away.

1995–2022: Steady progress in qubit quality, but no system demonstrates below-threshold error correction. Scaling up always degrades performance. Skeptics argue that practical QEC may be physically impossible at achievable noise levels.

2023: Microsoft and Quantinuum demonstrate 12 logical qubits on 56 physical qubits using the H2 trapped-ion system. First tangible evidence that practical QEC is within reach.

December 2024: Google Willow achieves below-threshold error correction. Published in Nature. This is the moment the threshold is crossed for the first time. The logical error rate halves with each increase in code distance (3×3 → 5×5 → 7×7). The logical qubit lifetime exceeds the best physical qubit by 2.4×. The error suppression factor is Λ = 2.14 ± 0.02 per code distance step.
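The suppression factor can be turned into a rough projection. The sketch below takes the distance-7 error per cycle (0.143%) and Λ = 2.14 quoted in this report and assumes, as an extrapolation, that Λ stays constant at larger distances. Nothing guarantees that it will, and the function name is hypothetical.

```python
LAMBDA = 2.14            # error suppression per code-distance step (d -> d + 2)
ERROR_AT_D7 = 0.00143    # 0.143% logical error per cycle at distance 7


def projected_error(distance: int, lam: float = LAMBDA,
                    ref_distance: int = 7,
                    ref_error: float = ERROR_AT_D7) -> float:
    """Project the logical error per cycle, assuming a constant Lambda."""
    steps = (distance - ref_distance) / 2  # each step adds 2 to the distance
    return ref_error / lam ** steps


if __name__ == "__main__":
    for d in (7, 9, 11, 17, 27):
        print(f"distance {d}: {projected_error(d):.2e}")
```

Each step in distance divides the error by roughly two, so ten further steps beyond distance 7 divide it by about two thousand. The gain is exponential in distance, but the qubit cost grows with the square of the distance.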

From theoretical proposal (1995) to practical demonstration (2024) to explosion (2025). Helios demonstrated 94 error-detected qubits (48 via concatenation).

2025: An explosion of progress. In the first ten months of the year, over 120 peer-reviewed papers on quantum error correction are published, described by industry observers as a "tsunami" of activity. Quantinuum's Helios achieves 94 error-detected logical qubits (using [[4,2,2]] detection code) and 48 logical qubits at a 2:1 encoding ratio via code concatenation. IBM demonstrates all hardware elements of fault-tolerant computing with its experimental Loon processor and achieves real-time decoding of qLDPC codes in under 480 nanoseconds, a 10× speedup over previous leading approaches, delivered a full year ahead of schedule. QuEra publishes algorithmic fault-tolerance techniques claiming up to 100× overhead reduction.

DARPA QBI: The U.S. government’s Quantum Benchmarking Initiative[9], launched in July 2024, aims to determine whether utility-scale quantum computing is achievable by 2033. In April 2025, approximately eighteen companies entered Stage A. By November 2025, eleven companies across five modalities advanced to Stage B for year-long R&D evaluation. Microsoft and PsiQuantum advanced to the final stage of the related US2QC program.

Scientists proposed a way to make quantum computers reliable decades ago, but it took until recently for anyone to prove it actually works in a real machine. Since that proof, progress has exploded. The government is now actively evaluating whether a practical quantum computer can be built within a decade.

The Path Forward: From 48 to 10,000 Logical Qubits

The key question is: what is the path from today’s roughly 48-100 logical qubits to the 1,000-10,000+ logical qubits at error rates of 10⁻⁶ to 10⁻¹² needed for commercially transformative algorithms?

Today's best quantum computers have a modest number of reliable qubits. For problems like drug design, we need orders of magnitude more. The gap is large, but multiple approaches to closing it are being pursued simultaneously, each showing promising early results.

The answer depends critically on which error correction codes are used and how efficiently they can be implemented:

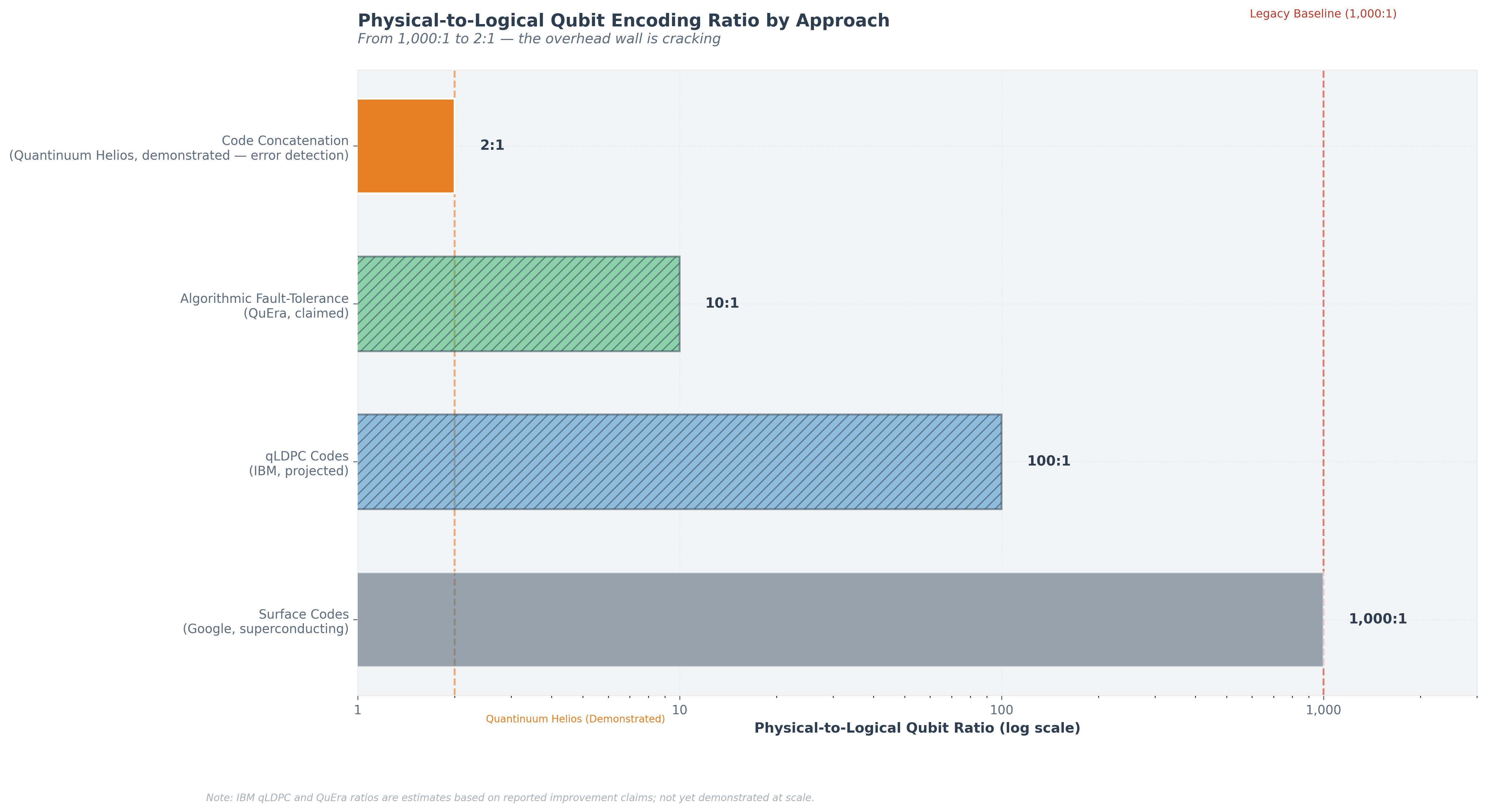

Surface codes (the standard workhorse, used by Google on Willow): Relatively simple to implement on nearest-neighbor grids, but very expensive in overhead. At current fidelities, roughly 1,000 physical qubits are required per high-fidelity logical qubit. Google’s Nature paper suggests that a distance-27 surface code (approximately 1,457 physical qubits) could achieve a 10⁻⁶ logical error rate. A 1,000-logical-qubit machine would then require roughly 1.5 million physical qubits, an enormous engineering challenge.
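The qubit counts in this paragraph follow from a simple formula. The sketch below assumes the usual rotated surface code layout, with d × d data qubits plus d × d − 1 measurement qubits per logical qubit, which reproduces the 1,457 figure at distance 27. It ignores routing space and any other overhead a real machine would need, so the totals should be read as a lower bound.

```python
def surface_code_qubits(distance: int) -> int:
    """Physical qubits for one logical qubit: d*d data + (d*d - 1) measurement."""
    return 2 * distance ** 2 - 1


def machine_size(logical_qubits: int, distance: int) -> int:
    """Physical qubits for a machine, counting only the code patches themselves."""
    return logical_qubits * surface_code_qubits(distance)


if __name__ == "__main__":
    for d in (3, 5, 7, 27):
        print(f"distance {d}: {surface_code_qubits(d)} physical qubits")
    print("1,000 logical qubits at distance 27:", machine_size(1000, 27))
```

Because the count grows with the square of the distance, pushing the logical error rate lower gets expensive quickly, which is why codes with better encoding rates attract so much attention.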

qLDPC codes (quantum Low-Density Parity Check, IBM's bet): These newer codes promise dramatically better encoding rates (more logical qubits per physical qubit) but require higher connectivity than nearest-neighbor grids, which is why IBM developed c-couplers for the Loon processor. IBM's "gross code" has attracted over 200 citations in its first year. If qLDPC codes work at scale, the path to thousands of logical qubits becomes much more feasible.

Code concatenation (Quantinuum's approach): By layering codes—combining the [[4,2,2]] Iceberg code with symplectic double codes—Quantinuum achieved the 2:1 encoding ratio on Helios. This approach leverages all-to-all connectivity inherent in trapped-ion architectures. An important caveat: the [[4,2,2]] code is a distance-2 detection code—it identifies errors through post-selection rather than correcting them. Scaling to fault-tolerant computation will require higher-distance codes that have not yet been demonstrated at this encoding efficiency. If the concatenation approach scales to higher-distance codes, it could offer the most qubit-efficient path to fault tolerance.

Genon codes and SWAP-transversal gates (also Quantinuum): Recent work from Quantinuum QEC researchers introduced genon codes that exploit the QCCD architecture’s qubit-movement capabilities, performing logical gates by physically relabeling qubits—essentially “for free” in a system where ions can move.

From 1,000:1 to 2:1 — the overhead wall is cracking. Quantinuum's 2:1 uses error detection (post-selection), not full correction.

Three teams are attacking the efficiency problem from three angles: Google is refining surface codes (the brute-force method), IBM is developing qLDPC codes (a fundamentally different approach that needs less hardware), and Quantinuum is layering code concatenation to squeeze maximum efficiency. Any one of these could break the overhead problem wide open.

1.3 The Trendlines

Five trendlines define the trajectory of quantum computing. Each tells a different part of the story.

Trendline 1: Gate Fidelity Over Time

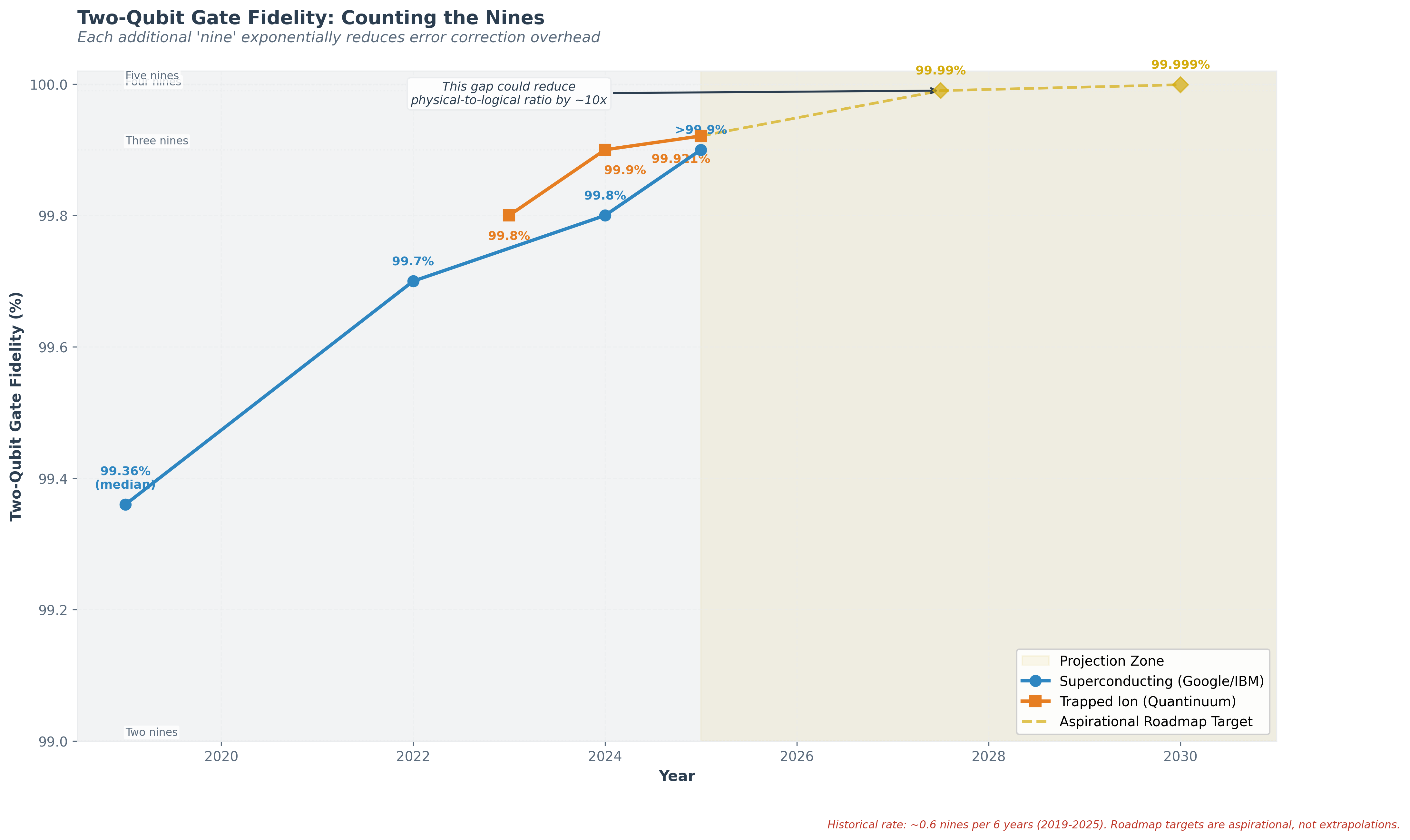

This is the most important trendline in quantum computing. Each additional “nine” of gate fidelity (99.9% → 99.99%) exponentially reduces the error correction overhead required, which is why counting the nines is the central metric of this report. Best reported two-qubit gate fidelities, by year and platform:

2019: ~99.5% (Google Sycamore, superconducting, 53 qubits)

2022: ~99.7% (various platforms, incremental improvement)

2023: ~99.8% (Quantinuum H2, trapped ion, 56 qubits)

2024: ~99.8% (Google Willow, superconducting, 105 qubits); ~99.9% (Quantinuum H2 upgraded)

2025: ~99.921% (Quantinuum Helios, trapped ion, 98 qubits); >99.9% (IBM Heron Rev 3, superconducting, 57+ coupler pairs)

Industry roadmap targets: 99.99% (“four nines”) by ~2027–2028; 99.999% (“five nines”) by ~2030

The historical rate of improvement, based on the data above, has been approximately 0.8 additional nines over six years (from ~99.5% in 2019 to ~99.921% in 2025 for the leading platform), or about one nine every seven to eight years, slower than one nine per 3-4 years. Industry roadmaps target four nines (99.99%) by ~2027–2028, but these are aspirational goals, not extrapolations from demonstrated rates.

Moreover, the jump from three nines to four nines faces qualitatively different error sources (correlated errors, leakage, cosmic ray events) that may not yield to the same techniques that achieved the first three nines. Each additional nine, if achieved, has outsized impact due to the exponential relationship between fidelity and error correction overhead: going from three nines (99.9%) to four nines (99.99%) could reduce the physical-to-logical qubit ratio by an order of magnitude for surface codes.

Accuracy has been improving, but each additional step gets harder because new types of errors emerge at higher levels of precision. If the next milestone is reached, the math means it could cut the hardware needed by a factor of ten, dramatically changing the economics. But reaching it is not guaranteed on any particular timeline.

Each additional 'nine' exponentially reduces error correction overhead. Aspirational roadmap targets shown. Historical rate ~0.8 nines per 6 years.
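"Counting the nines" can be made precise by defining the number of nines as the negative base-10 logarithm of the error rate, so that 99.9% is exactly three nines. The sketch below uses that definition, which is an assumption about how the metric is meant, to measure the gain between the 2019 and 2025 fidelities listed above.

```python
import math


def nines(fidelity: float) -> float:
    """Number of nines: 99.9% -> 3.0, 99.99% -> 4.0, and so on."""
    return -math.log10(1.0 - fidelity)


def years_per_nine(f_start: float, f_end: float, years: float) -> float:
    """Average number of years needed to gain one nine at the observed pace."""
    return years / (nines(f_end) - nines(f_start))


if __name__ == "__main__":
    f_2019, f_2025 = 0.995, 0.99921
    print("nines in 2019:", round(nines(f_2019), 2))
    print("nines in 2025:", round(nines(f_2025), 2))
    print("gain:", round(nines(f_2025) - nines(f_2019), 2))
    print("years per nine:", round(years_per_nine(f_2019, f_2025, 6), 1))
```

The same function makes it easy to compare roadmap targets against history: reaching 99.99% from 99.921% means gaining roughly another nine, in far fewer years than the last one took.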

Trendline 2: Logical Qubit Count Over Time

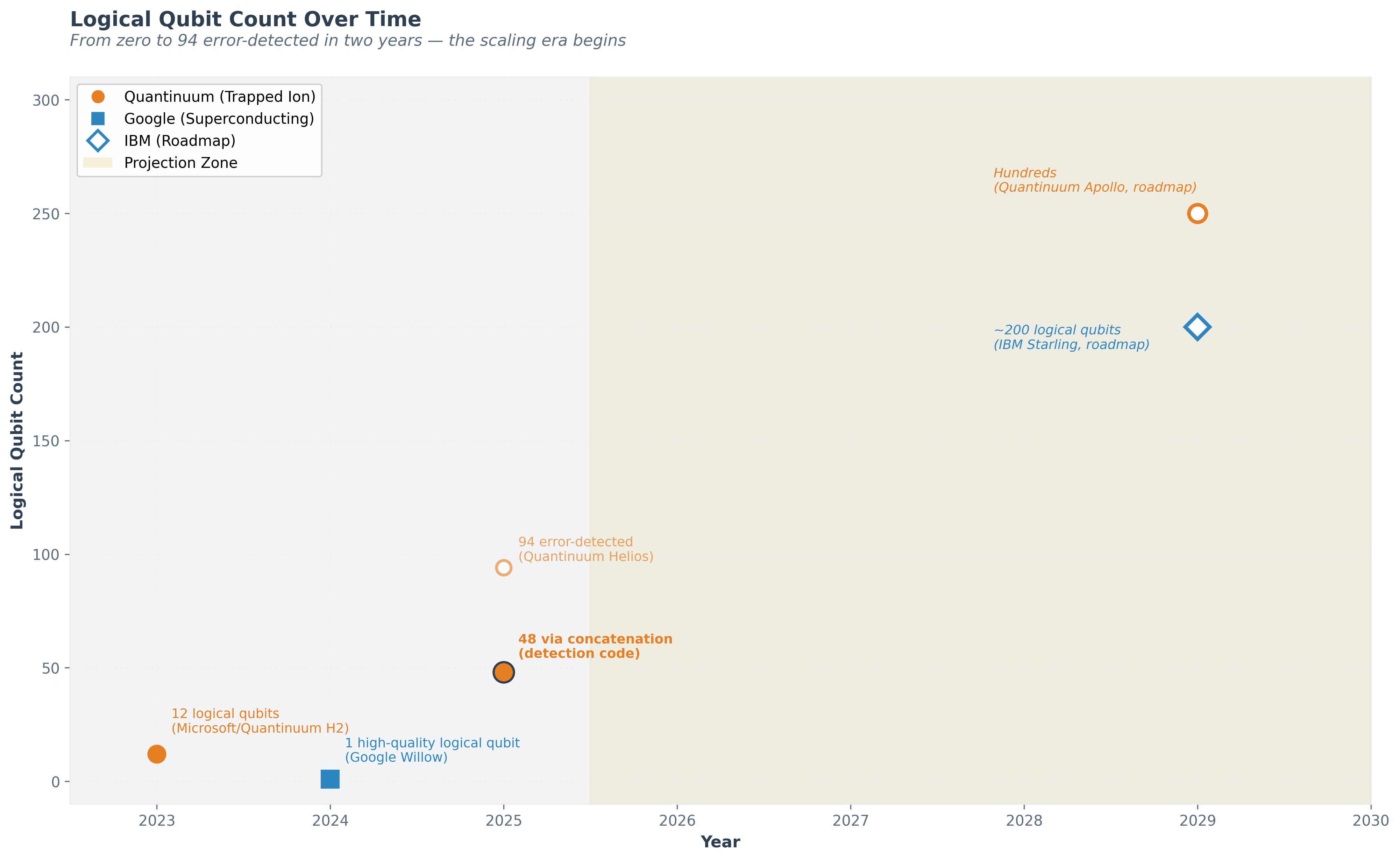

This is the newest trendline and the one most directly predictive of when quantum computers become commercially useful:

2023: First demonstrations, 3-12 logical qubits (Microsoft/Quantinuum on H2)

2024: Google Willow distance-7 surface code (1 high-quality logical qubit); multiple demonstrations across platforms

2025: Quantinuum Helios: 94 error-detected logical qubits (using [[4,2,2]] detection code with post-selection); 48 logical qubits at 2:1 encoding ratio via code concatenation (note: detection, not full error correction). IBM Loon: demonstrated all hardware elements for fault-tolerant computing.

Roadmap targets:

- Quantinuum Apollo: hundreds of logical qubits by 2029

- IBM Starling: 200 logical qubits running 100 million gates by 2029

- Google: error-corrected useful computation by end of decade

94 error-detected (48 via concatenation) demonstrated in 2025. Hollow markers represent roadmap targets.

The trajectory from zero to ninety-four error-detected logical qubits in roughly two years is striking, though it is important to distinguish between error detection (Helios's current capability) and the full error correction needed for fault-tolerant computation. If the rate of progress holds—a significant if—hundreds of logical qubits by 2028–2029 is plausible, and thousands by the early 2030s.

Trendline 3: Investment

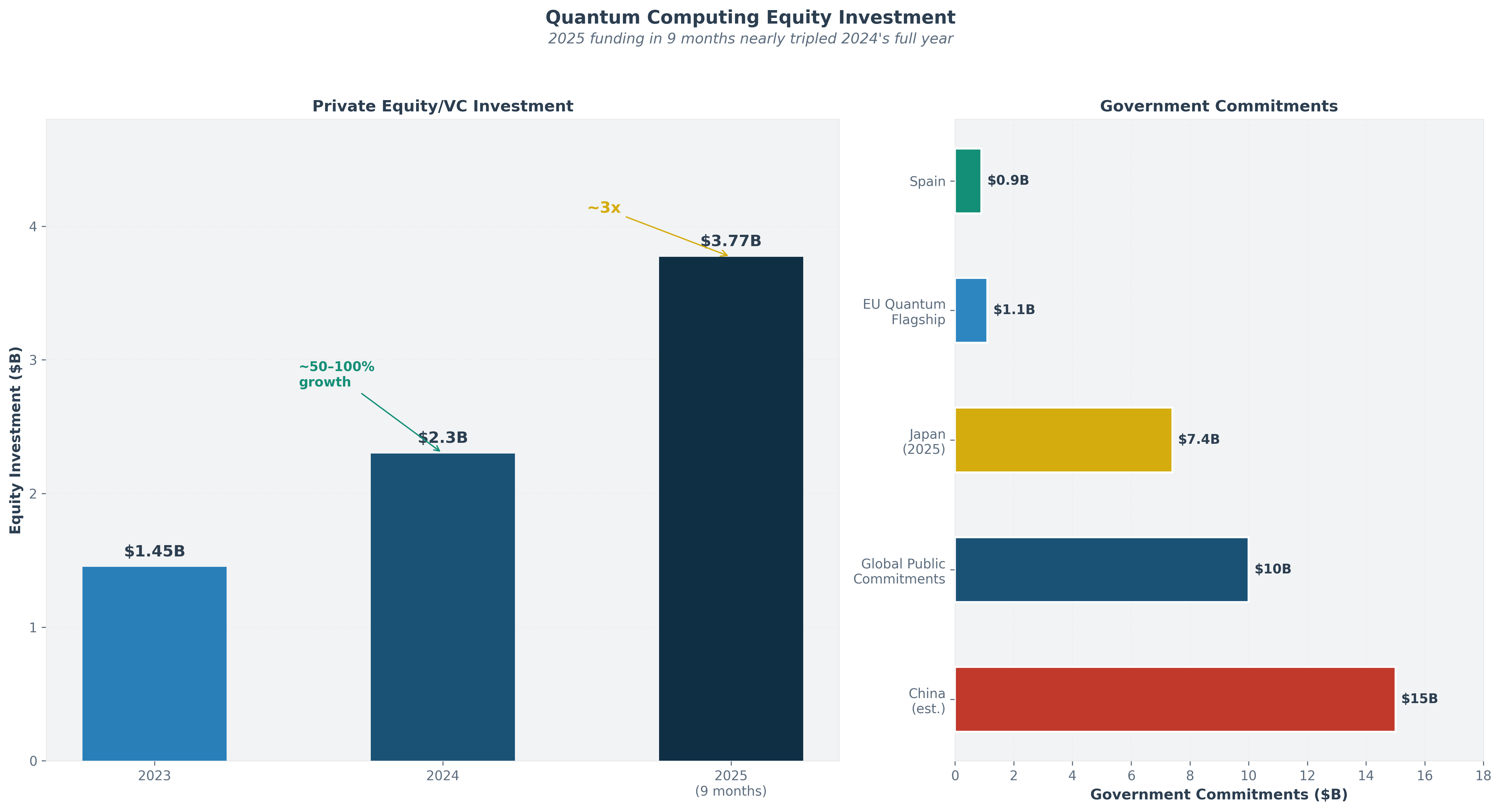

Capital flows into quantum computing have inflected sharply upward:

2023: ~$1.3-1.6 billion in venture capital and private equity globally

2024: ~$2.0-2.6 billion (50-58% increase year-over-year)

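2025 (first 9 months): $3.77 billion in total equity funding (reported as nearly 3× the 2024 full-year figure, which implies a 2024 baseline of roughly $1.3 billion, lower than the ~$2.0-2.6 billion range above)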

Government investment has also surged. By April 2025, global public quantum commitments exceeded $10 billion, driven largely by Japan’s $7.4 billion announcement and Spain’s €808 million investment. The United States leads in private-sector diversity and VC funding. China is estimated to have invested approximately $15 billion (exact figures are not officially confirmed). The EU’s Quantum Flagship has committed over €1 billion.

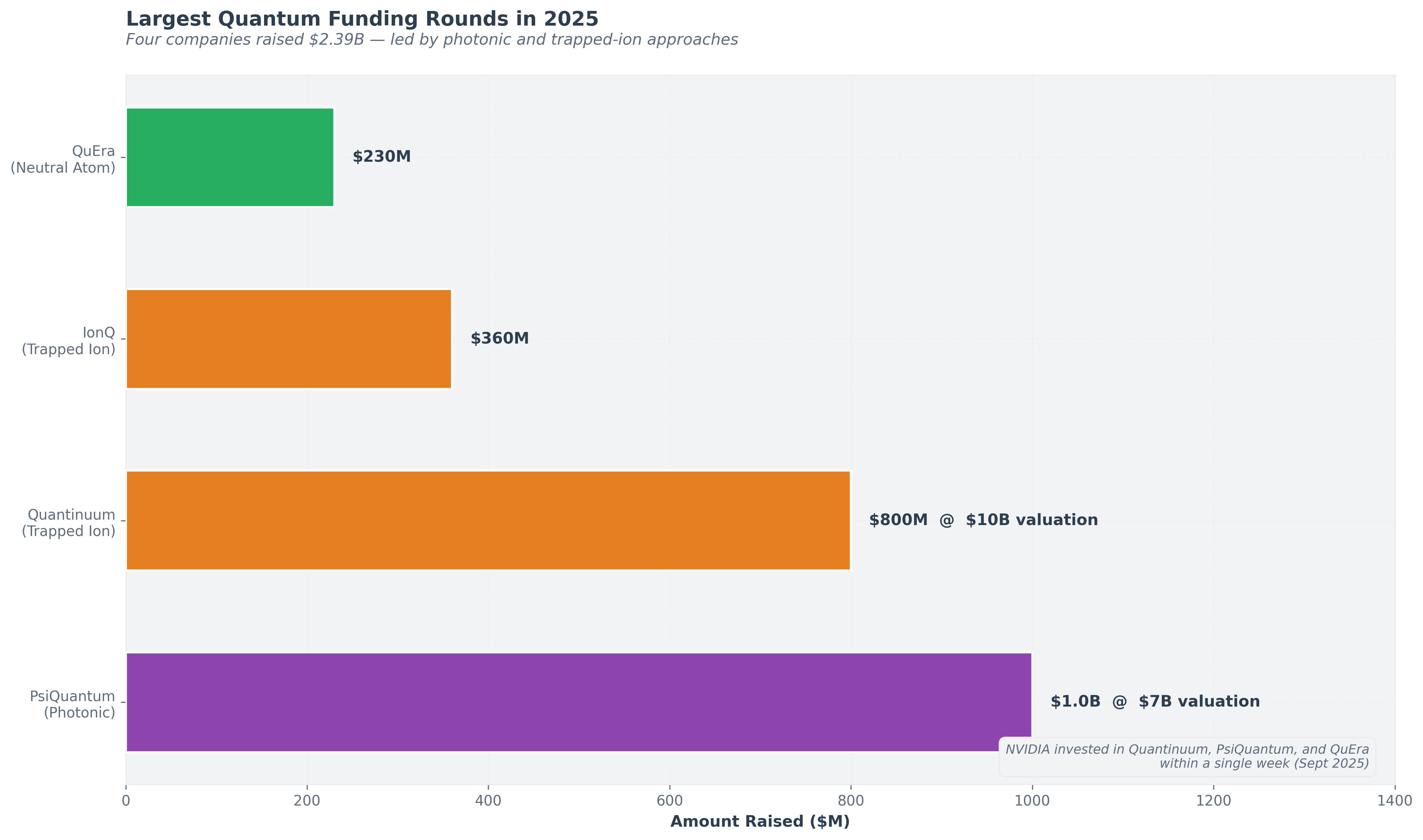

Notable 2025 funding rounds:

- PsiQuantum ($1 billion, $7 billion valuation, September 2025)

- Quantinuum ($800 million, $10 billion valuation)

- IonQ ($360 million)

- QuEra ($230 million)

2025 funding in 9 months nearly tripled 2024's full year.

Four companies raised $2.39B — led by photonic and trapped-ion approaches.

NVIDIA emerged as a major quantum investor in September 2025, backing Quantinuum, PsiQuantum, and QuEra within a single week. NVIDIA's ecosystem play widened further in November 2025 with NVQLink—an open interconnect standard co-announced with 17 QPU vendors, five control-electronics vendors, and nine U.S. national laboratories—and in the same month NVIDIA added 15 international supercomputing centers (including RIKEN, Jülich, KAUST, Pawsey, CINECA, and TII) as NVQLink deployment sites, disclosing 400 Gb/s GPU–QPU throughput at sub-4-microsecond latency and a Quantinuum decoder reaction time of 67 microseconds, roughly 32× faster than the initial spec[25].

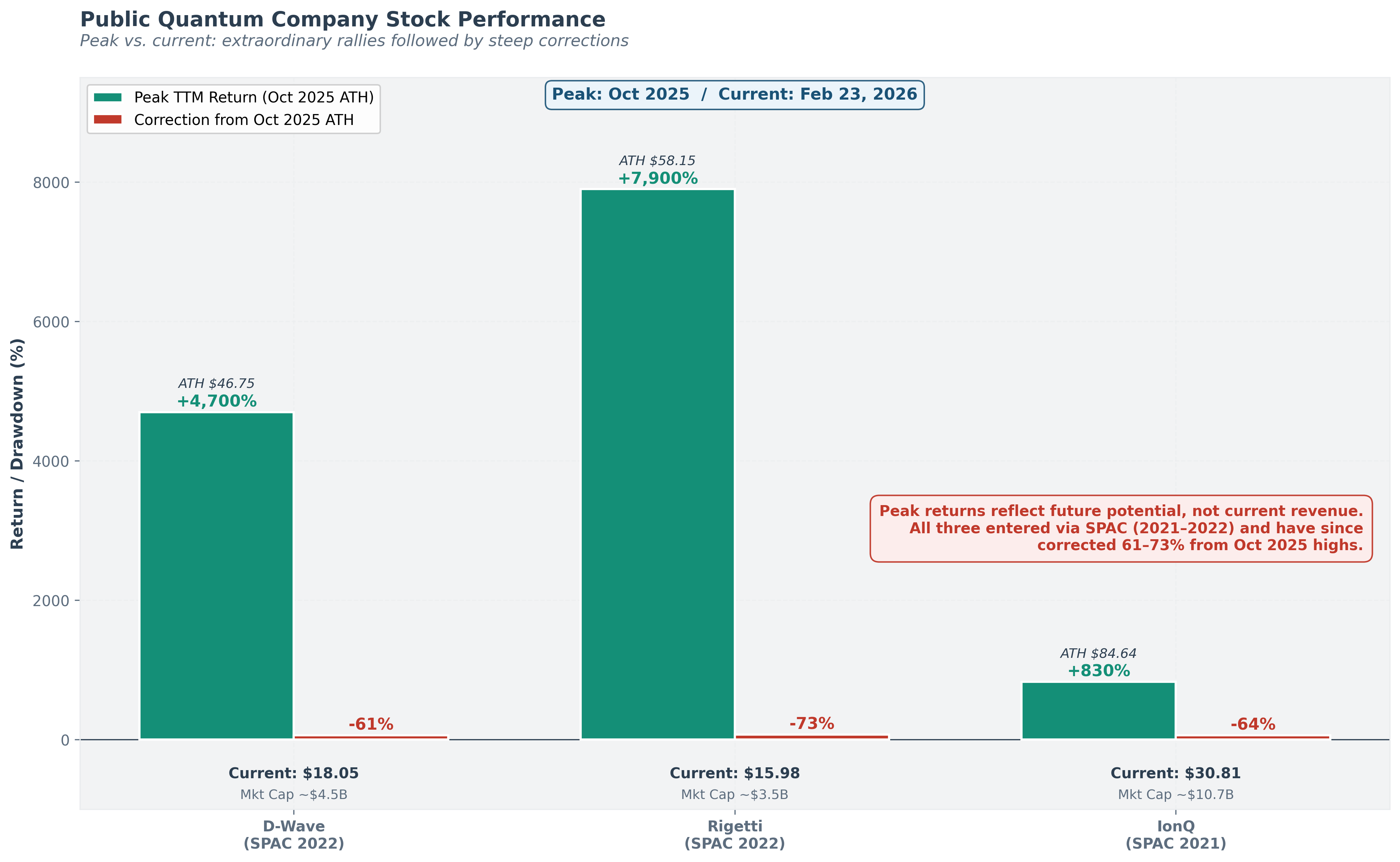

The money is real and accelerating: recent investment has nearly tripled year over year, and governments worldwide are committing billions. When both Wall Street and the military are this interested simultaneously, it usually means the technology has crossed from "science fiction" to "serious engineering project."

Public quantum company stock performance has been extraordinary if volatile: D-Wave rose approximately 3,700%, Rigetti approximately 5,700%, and IonQ approximately 700% on a trailing twelve-month basis as of early 2025. Important context: all three companies entered public markets via SPAC mergers in 2021–2022 (IonQ October 2021, Rigetti March 2022, D-Wave August 2022), a period of peak SPAC exuberance, and their percentage gains are measured from post-SPAC lows. At current prices, IonQ trades at a price-to-sales ratio exceeding 500× (on approximately $43 million in annual revenue against a ~$24.5 billion market capitalization). For comparison, even the most optimistically valued AI companies trade at 30-60× sales. Market capitalizations: IonQ ~$24.5 billion, Rigetti ~$13 billion (as of October 2025). These valuations are largely based on future potential rather than current revenue.
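The valuation multiple quoted for IonQ can be reproduced from the two figures given. The sketch below uses only the market capitalization and revenue stated in this report. The 60× comparison point is the upper end of the AI range mentioned above, and the function name is illustrative.

```python
def price_to_sales(market_cap_usd: float, annual_revenue_usd: float) -> float:
    """Price-to-sales ratio: market capitalization divided by annual revenue."""
    return market_cap_usd / annual_revenue_usd


if __name__ == "__main__":
    ionq_ps = price_to_sales(24.5e9, 43e6)  # figures quoted in the text
    ai_upper_ps = 60.0                      # upper end of the 30-60x AI range
    print("IonQ price-to-sales:", round(ionq_ps))
    print("multiple of the AI upper bound:", round(ionq_ps / ai_upper_ps, 1))
```

Put differently, at a 60× multiple the same market capitalization would require roughly ten times the revenue reported today.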

The public-market map expanded sharply in early 2026: the universe of publicly traded quantum pure-plays effectively doubled in the first four months of the year. Rigetti brought its Cepheus-1 108-qubit system live on its cloud platform on April 7, 2026 (its first system past 100 qubits, at a reported 99.1% median two-qubit gate fidelity) and announced an $8.4 million order from India's C-DAC for a 108-qubit system in the second half of 2026 (government order subject to delivery milestones and acceptance testing)[21]. Quantinuum filed its S-1 confidentially on January 14, 2026; valuation target and raise size have not been publicly disclosed, though trade-press reporting references a multi-billion-dollar range and a potential 2027 listing[22]. IQM (Finland) announced a SPAC merger with Real Asset Acquisition Corp. (Nasdaq: RAAQ) on February 23, 2026 at a ~$1.8 billion pre-money equity valuation, targeting a Nasdaq listing around June 2026 and reporting $35 million of 2025 revenue with commercial bookings over $100 million; it would be the first European public quantum pure-play[23]. Infleqtion closed its SPAC on February 13, 2026 and began trading on NYSE as INFQ on February 17, 2026 (see Section 1.1)[18]. In mid-April 2026, Mizuho cut price targets on IONQ ($61, from $80), RGTI ($33, from $43), and QBTS ($31, from $40) while maintaining Outperform. Consensus coverage is wider (roughly $45–$95 on IONQ, $18–$50 on RGTI, $12–$42 on QBTS pre-drawdown). The sector nonetheless rallied on April 14–15 following IonQ's photonic interconnect and DARPA HARQ news[24].

A strong caution for investors: these stock prices reflect what the market hopes quantum computing will become, not what it earns today. It's like the early internet era—some companies became giants, but most didn't survive even though the technology was real. The path will be volatile, and not every quantum company will make it.

Extraordinary returns reflect future potential, not current revenue. All three companies entered via SPAC mergers (2021–2022). IonQ P/S >500×.

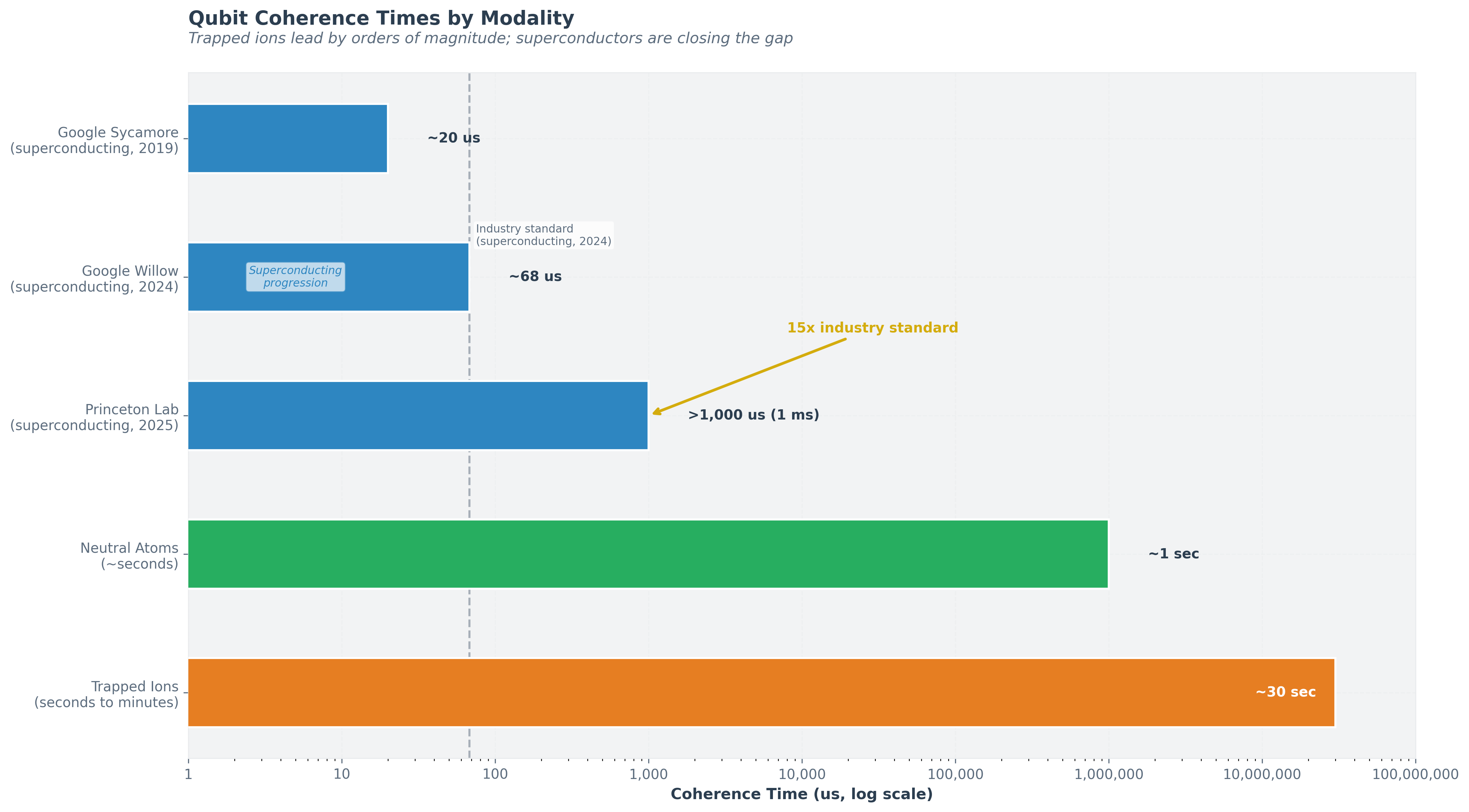

Trendline 4: Coherence Time

Coherence time—how long a qubit retains its quantum information before environmental noise destroys it—has improved steadily across all modalities:

- Superconducting transmons: ~20 μs (Google Sycamore, 2019) → ~68 μs (Google Willow, 2024) → >1 ms (Princeton lab demonstration, November 2025, a 15× improvement over industry standard)

- Trapped ions: Coherence times naturally range from seconds to minutes, a fundamental advantage of the modality.

- Neutral atoms: Ground-state coherence times on the order of seconds.

Princeton’s November 2025 breakthrough is particularly significant because it demonstrates that materials science improvements (substituting tantalum for niobium) can yield order-of-magnitude gains in coherence. If similar improvements can be achieved in production-grade systems, they could transform the error correction calculus for superconducting architectures.
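To see what the superconducting coherence figures above mean in practice, the sketch below computes the step-to-step improvement factors and a rough count of gates that fit inside one coherence window. The 50 ns gate time is an assumption chosen from the "tens to hundreds of nanoseconds" range cited earlier in this chapter, and ">1 ms" is treated as exactly 1 ms, so the last row is a lower bound.

```python
# Superconducting transmon coherence times quoted above, in microseconds.
coherence_us = {
    "Google Sycamore (2019)": 20.0,
    "Google Willow (2024)": 68.0,
    "Princeton lab demo (2025)": 1000.0,  # ">1 ms" taken as a 1 ms lower bound
}

ASSUMED_GATE_NS = 50.0  # assumption: a representative superconducting gate time


def improvement(old_us: float, new_us: float) -> float:
    """Ratio of new coherence time to old coherence time."""
    return new_us / old_us


def gates_per_window(coherence_time_us: float, gate_ns: float) -> float:
    """Rough number of sequential gates that fit inside one coherence time."""
    return coherence_time_us * 1000.0 / gate_ns


labels = list(coherence_us)
for prev, curr in zip(labels, labels[1:]):
    factor = improvement(coherence_us[prev], coherence_us[curr])
    print(f"{prev} -> {curr}: {factor:.1f}x")

for label, t_us in coherence_us.items():
    n_gates = gates_per_window(t_us, ASSUMED_GATE_NS)
    print(f"{label}: ~{n_gates:,.0f} gates per coherence window")
```

The Willow-to-Princeton ratio works out to roughly 14.7×, which is where the "15×" figure comes from. The gate counts are only an upper bound on useful circuit depth, since real fidelity degrades well before the full coherence time has elapsed.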

Trapped ions lead by orders of magnitude; superconductors are closing the gap.

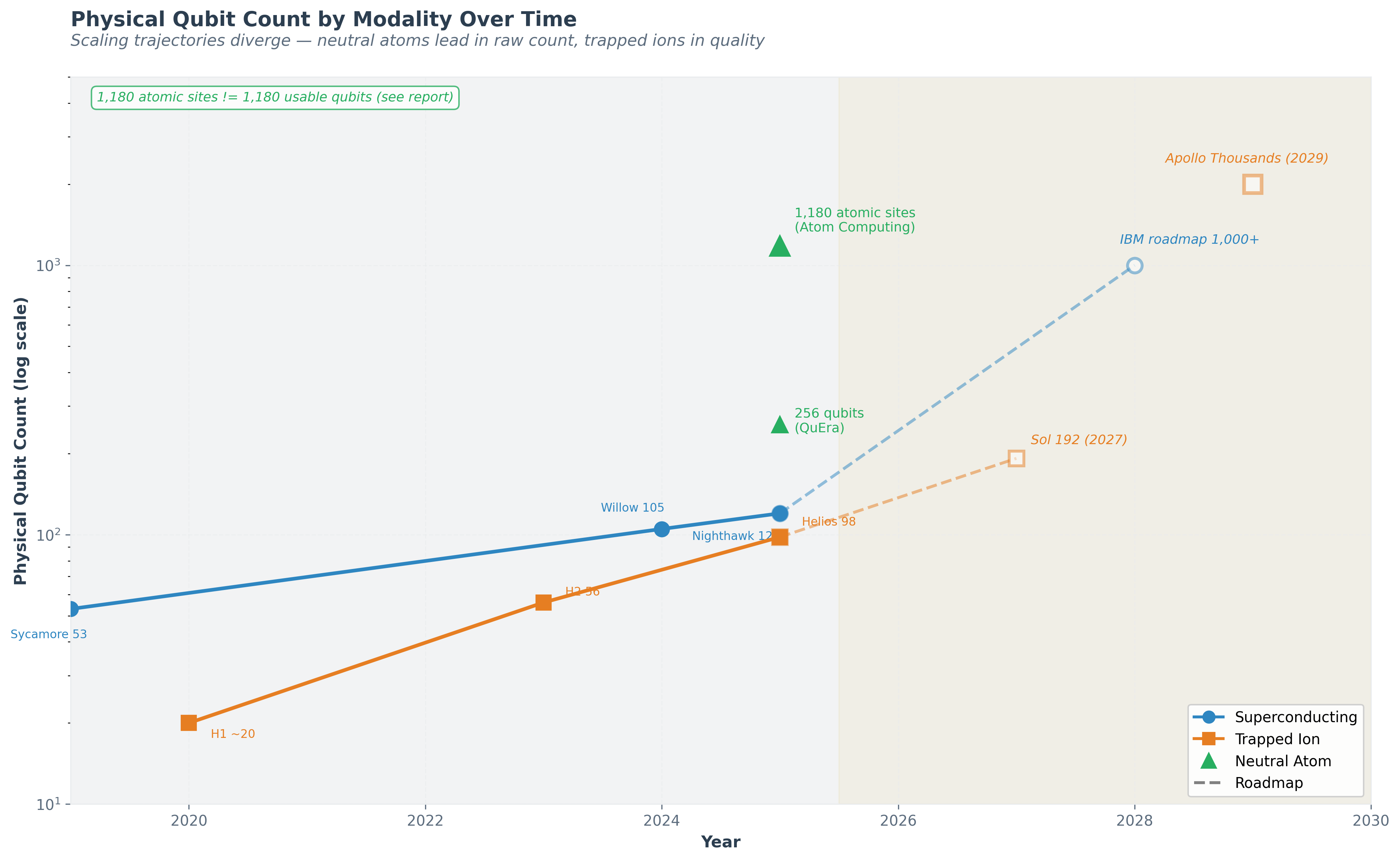

Trendline 5: Physical Qubit Count

Raw qubit count is the least informative metric by itself—what matters is usable qubits with sufficient quality. Nonetheless, the trajectory is worth noting:

- Superconducting: Google Sycamore 53 (2019) → Google Willow 105 (2024) → IBM Nighthawk 120 (2025). IBM's roadmap targets 1,000+ connected qubits via long-range couplers by 2028.

- Trapped Ion: Quantinuum H1 ~20 (2020) → H2 56 (2023) → Helios 98 (2025). Roadmap: Sol 192 (2027), Apollo thousands (2029).

- Neutral Atom: Atom Computing 1,180 sites demonstrated; QuEra 256 qubits.

Headlines love to report raw qubit counts, but raw count alone is like judging a car by its number of wheels. What matters is the combination of quantity, quality, and how well the qubits can communicate with each other.

Holistic Benchmarks: Quantum Volume and CLOPS

Individual metrics (qubit count, gate fidelity, coherence time) each tell only part of the story. Two holistic benchmarks attempt to capture system-level performance.

Quantum Volume (QV) measures the largest square random circuit (equal width and depth) a quantum computer can execute reliably, capturing the interplay of qubit count, connectivity, gate fidelity, and crosstalk in a single number. Quantinuum's H2 system holds the current record at QV 2^20 (1,048,576), several orders of magnitude above competing platforms. IBM's Eagle-class processors have achieved QV 2^7 (128). QV provides a useful cross-platform comparison but has limitations: it measures a specific random circuit structure, not necessarily performance on practical algorithms.

Circuit Layer Operations Per Second (CLOPS) measures how many quantum circuit layers a system can execute per second, capturing not just gate speed but also the classical control overhead, compilation time, and data transfer latency that determine real-world throughput. IBM's systems lead on CLOPS due to fast superconducting gate times and optimized classical control infrastructure.

Together, QV and CLOPS provide a more complete picture than any single metric. Their omission from most quantum computing discussions (including, until this section, this report) reflects the field's tendency to emphasize whichever individual metric a given platform leads on.
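Because Quantum Volume is reported as a power of two, the headline numbers are easier to compare through their exponents. The short sketch below converts between the two forms and quantifies the gap between the two QV figures quoted above; the helper names are illustrative.

```python
import math


def qv_from_width(n: int) -> int:
    """QV = 2**n, where n is the largest square circuit (width = depth) passed."""
    return 2 ** n


def width_from_qv(qv: int) -> int:
    """Recover n from a QV value; QV must be an exact power of two."""
    if qv <= 0 or qv & (qv - 1) != 0:
        raise ValueError("QV must be a positive power of two")
    return qv.bit_length() - 1


quantinuum_h2 = qv_from_width(20)   # figure quoted in the text
ibm_eagle = qv_from_width(7)        # figure quoted in the text

ratio = quantinuum_h2 // ibm_eagle
orders_of_magnitude = math.log10(ratio)

print(f"Quantinuum H2 QV: {quantinuum_h2:,}")
print(f"IBM Eagle-class QV: {ibm_eagle:,}")
print(f"Ratio: {ratio:,}x (~{orders_of_magnitude:.1f} orders of magnitude)")
print(f"Exponent gap: {width_from_qv(quantinuum_h2) - width_from_qv(ibm_eagle)} qubits of square-circuit width")
```

The QV ratio is large (about 8,192×), but the underlying difference is 13 additional qubits of reliably executable square-circuit width, which is why the exponent is often the more intuitive number to compare.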

Think of it this way: individual specs are like a car's horsepower, steering precision, and fuel tank size. Holistic benchmarks are like lap times—they measure how the whole car performs on the track, not just one component. No single number tells you everything, which is why serious evaluation requires looking at multiple metrics together.

Growth rates vary by modality and are not following a simple exponential law. Qubit scaling is qualitatively harder than transistor scaling—each added qubit must maintain coherence, fidelity, and connectivity with all others, which creates engineering challenges that grow non-linearly.
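As a rough illustration of how growth rates differ by modality, the sketch below computes a compound annual growth rate and implied doubling time from the endpoint qubit counts listed under Trendline 5. Two caveats apply: the superconducting series mixes vendors (Google Sycamore to IBM Nighthawk), and fitting a single exponential to two endpoints is exactly the simplification the text warns against, so treat the output as descriptive rather than predictive.

```python
import math


def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate between two endpoint values."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end, and years must be positive")
    return (end / start) ** (1.0 / years) - 1.0


def doubling_time_years(rate: float) -> float:
    """Years needed to double at a constant annual growth rate."""
    return math.log(2.0) / math.log(1.0 + rate)


# (start count, start year, end count, end year), taken from the lists above.
series = {
    "Superconducting (Sycamore 53 -> Nighthawk 120)": (53, 2019, 120, 2025),
    "Trapped ion (H1 ~20 -> Helios 98)": (20, 2020, 98, 2025),
}

for label, (n0, y0, n1, y1) in series.items():
    rate = cagr(n0, n1, y1 - y0)
    print(f"{label}: {rate:.1%}/yr, doubling every {doubling_time_years(rate):.1f} years")
```

On these endpoints the trapped-ion count has grown more than twice as fast in percentage terms as the superconducting flagship count, though from a smaller base.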

Scaling trajectories diverge — neutral atoms lead in raw count, trapped ions in quality. Dashed lines represent roadmap targets.

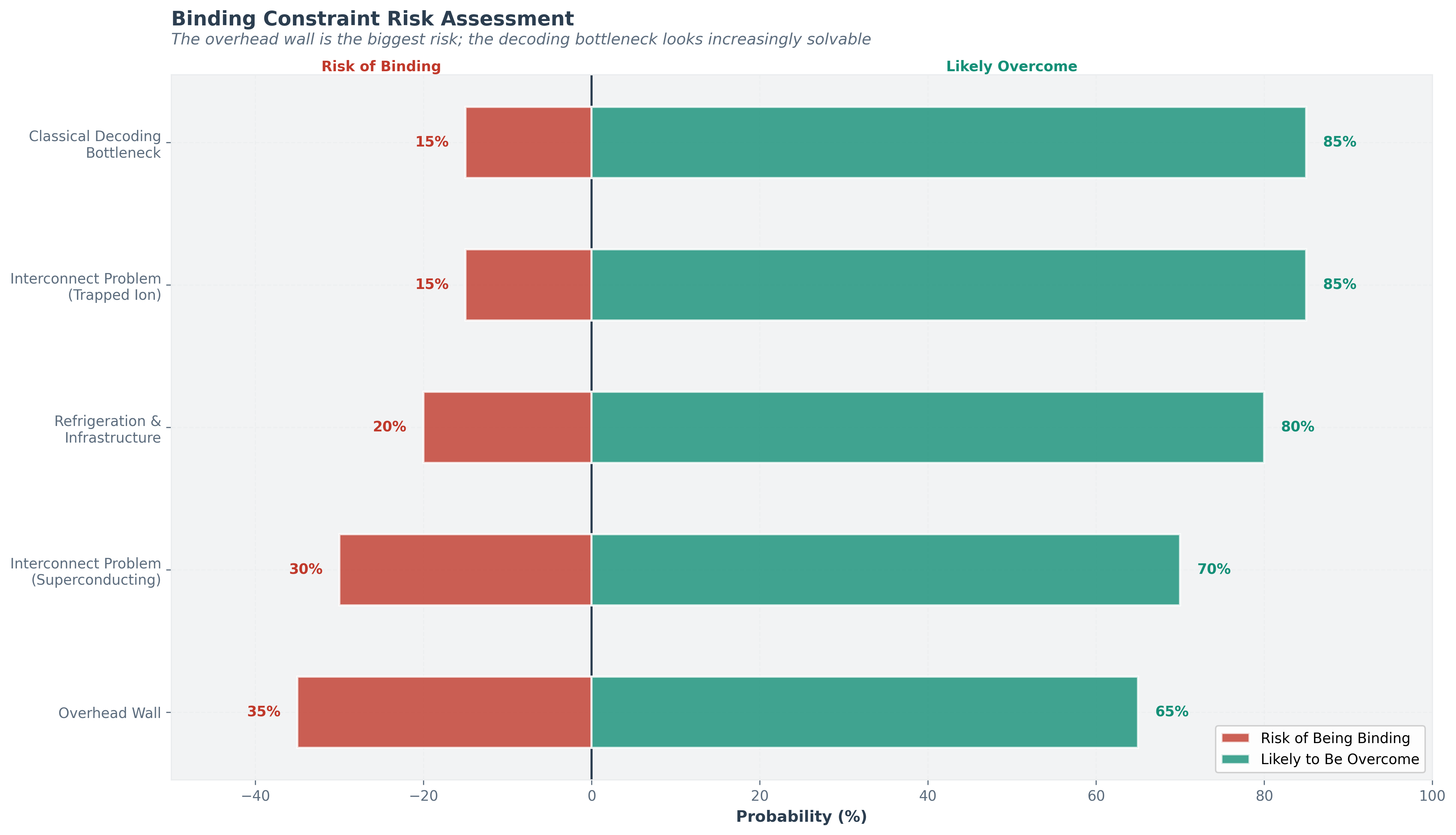

1.4 Quantum’s Binding Constraints: The Walls

Every technology hits binding constraints that can stall progress. Quantum computing has its own walls. Understanding them is essential for calibrated forecasting.

Every technology hits bottlenecks that could stall progress. Quantum computing has four. This section describes each one honestly: what it is, why it might be solvable, and the risk that it isn't.

Wall 1: The Overhead Wall

The overhead wall is the single biggest barrier to practical quantum computing. For superconducting systems using surface codes, the physical-to-logical qubit ratio is approximately 1,000:1 for high-fidelity computation. This means a commercially useful 1,000-logical-qubit machine would require roughly one million physical qubits, vastly beyond anything built or planned for the near term.

Why insiders are bullish it can be broken: Three developments are converging. First, Quantinuum has demonstrated a 2:1 encoding ratio in trapped ions, proving that much more efficient codes exist and work. Second, IBM’s qLDPC codes promise dramatically better encoding rates for superconducting systems, and the Loon processor demonstrates the hardware needed to implement them. Third, QuEra’s algorithmic fault-tolerance techniques claim up to 100× overhead reduction. If any of these approaches scales, the overhead wall breaks.

Historical analogy: In classical computing, the challenge of reliable transistor scaling was met not by making individual transistors perfect, but by developing error-correcting codes (ECC memory, parity bits) and redundant architectures. The quantum field is following an analogous path, but the physics is harder.

Calibrated confidence: The overhead wall is being actively undermined from multiple directions simultaneously. The most likely outcome is that overhead ratios decline to between 10:1 and 100:1 within this decade through a combination of improved fidelities and better codes, making machines with hundreds of logical qubits feasible. The 1,000:1 ratio assumed in worst-case analyses looks increasingly unlikely to be required. Probability that the wall is substantially broken by 2030: ~60-70%.
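The overhead ratios discussed in this section translate directly into machine size. The sketch below tabulates the physical qubits needed for a 1,000-logical-qubit machine at each ratio mentioned above. It also includes the standard textbook count for a rotated surface code patch (d² data qubits plus d² − 1 measurement qubits), which is not taken from this report and is offered only to show roughly what code distance a 1,000:1 ratio corresponds to; it ignores routing and magic-state overhead.

```python
def physical_qubits(logical_qubits: int, overhead_ratio: int) -> int:
    """Physical qubits needed at a given physical-to-logical overhead ratio."""
    return logical_qubits * overhead_ratio


def rotated_surface_code_qubits(distance: int) -> int:
    """Textbook qubit count for one rotated surface code patch of distance d:
    d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * distance * distance - 1


TARGET_LOGICAL = 1_000  # the "commercially useful" machine size used in the text

# Ratios mentioned in this section: worst case, projected range, trapped-ion demo.
for ratio in (1_000, 100, 10, 2):
    total = physical_qubits(TARGET_LOGICAL, ratio)
    print(f"{ratio:>5}:1 overhead -> {total:>9,} physical qubits")

# Which surface code distances land near the 1,000:1 figure?
for d in (7, 15, 23):
    print(f"distance {d:>2}: {rotated_surface_code_qubits(d):>5} physical qubits per logical qubit")
```

Moving from 1,000:1 to the projected 10:1 to 100:1 range shrinks the target machine from about a million physical qubits to between ten thousand and a hundred thousand, which is the scale vendor roadmaps point at.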

Wall 2: The Classical Decoding Bottleneck

Error correction requires a fast classical computer to decode error syndromes in real time. If the decoder cannot keep up with the quantum processor’s clock speed, errors accumulate faster than they can be corrected. This is particularly challenging for superconducting systems, where gate times are tens to hundreds of nanoseconds.
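The "keep up or fall behind" dynamic described above can be illustrated with a toy throughput model. This is a deliberately simplified sketch, not a model of any vendor's decoder: it assumes one syndrome round arrives at a fixed interval and a single serial decoder with a fixed per-round decode time. The 1 µs round time and both decode times are hypothetical values chosen for illustration.

```python
def backlog_after(rounds: int, round_time_us: float, decode_time_us: float) -> int:
    """Syndrome rounds still waiting for the decoder after `rounds` rounds.

    Toy model: one round arrives every `round_time_us`; a single serial
    decoder needs `decode_time_us` per round and cannot decode a round
    before it has arrived.
    """
    if round_time_us <= 0 or decode_time_us <= 0:
        raise ValueError("times must be positive")
    elapsed_us = rounds * round_time_us
    decoded = min(rounds, int(elapsed_us // decode_time_us))
    return rounds - decoded


ROUND_TIME_US = 1.0  # hypothetical syndrome-extraction interval

for decode_time_us in (0.5, 2.0):  # hypothetical: faster vs slower than the round time
    for rounds in (1_000, 10_000):
        waiting = backlog_after(rounds, ROUND_TIME_US, decode_time_us)
        print(f"decode {decode_time_us} us, {rounds:>6} rounds -> backlog {waiting}")
```

When the decoder is faster than the syndrome rate the backlog stays at zero. When it is slower, the backlog grows in proportion to run length, so longer computations fall further behind rather than catching up.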

While the quantum computer runs, a regular computer has to watch for errors and fix them in real time. If this "umpire" is too slow to call the plays, the game falls apart. Recent breakthroughs have made the umpire dramatically faster, and this wall is looking increasingly conquerable.

Current state: IBM achieved real-time qLDPC decoding[4] in under 480 nanoseconds using an AMD FPGA, a 10× speedup over previous approaches, delivered a year ahead of schedule. Quantinuum integrates NVIDIA Grace Hopper GPUs into the Helios control engine for real-time error decoding, achieving an improvement of more than 3% in logical fidelity. In April 2026, NVIDIA released Ising, an open family of AI models specifically for QEC decoding and qubit calibration (code Apache-2.0; model weights under NVIDIA's Open Model License), claiming 2.5× faster and 3× more accurate decoding than PyMatching on vendor-disclosed benchmarks (not yet independently replicated) and compressing calibration from days to hours. Launch signatories span every qubit modality, including Infleqtion, IonQ, Atom Computing, IQM, Q-CTRL, Harvard, LBNL, and Sandia[26].

Calibrated confidence: This wall is likely to be overcome. Classical computing power continues to improve, and dedicated decoder ASICs are a natural next step. The decoder problem is a well-defined engineering challenge, not a fundamental physics barrier. Probability of this being a binding constraint by 2030: ~15%.

Wall 3: The Interconnect Problem

Scaling beyond a single quantum chip requires quantum interconnects: the ability to entangle qubits on separate chips and maintain coherence across the connection. This is analogous to the classical networking problem but far harder, because quantum information cannot be copied (the no-cloning theorem) and must be transmitted without measurement.

Today's quantum computers are standalone: each chip works in isolation. To build bigger machines, we need to connect chips while preserving quantum properties. It's like moving a soap bubble between rooms without popping it, which is extraordinarily hard because quantum data cannot be copied for backup.

IBM’s roadmap addresses this with modular architectures: Kookaburra (2026) is designed as the first modular quantum processor for logical qubit storage, and Cockatoo (2027) aims to demonstrate entanglement between separate processors. IBM’s 2026 roadmap also now frames Nighthawk as scaling to three 120-qubit modules (360 qubits in total) running circuits of up to 7,500 gates, aimed at quantum-plus-HPC advantage candidates[28]. Quantinuum’s QCCD architecture approaches the problem differently, scaling through junctions within a single trap rather than interconnecting separate chips.

Calibrated confidence: Quantum interconnects are an open engineering challenge. Demonstration at production scale is likely three to five years away. This is more likely to slow progress than stop it. Probability of this being a binding constraint: ~30% for superconducting architectures, ~15% for trapped ions (which scale differently).

Wall 4: The Refrigeration and Infrastructure Wall

Superconducting qubits require millikelvin temperatures. Current dilution refrigerators can cool systems of hundreds of qubits. Scaling to millions of qubits would require either vastly larger cryogenic systems or modular approaches with quantum interconnects between refrigerators. Helium-3, the primary coolant for dilution refrigerators, is scarce and geopolitically concentrated.

Some quantum computers need to be colder than outer space, requiring specialized refrigerators that are expensive, complex, and rely on a rare gas. Scaling up means building refrigerators far larger than anything attempted before, or networking many smaller ones together. Other approaches avoid the extreme cold but face their own infrastructure challenges.

Trapped ions, neutral atoms, and photonic systems have different but non-trivial infrastructure requirements: ultra-high vacuum, precision laser systems, and specialized optics, respectively. A single Quantinuum Helios system involves a room full of lasers, mirrors, optical fiber, and vacuum equipment.

Calibrated confidence: Infrastructure is a practical bottleneck that will slow deployment but not prevent it. The analogy to early mainframe computing is apt: early quantum computers, like early classical computers, will be large, expensive, room-sized installations accessible primarily via cloud. Probability of this being the binding constraint: ~20%.

The overhead wall is the biggest risk; the decoding bottleneck looks increasingly solvable.

Notes

1. Princeton University, transmon qubit coherence time exceeding 1 ms via tantalum fabrication (November 2025).

2. Quantinuum, 'Introducing Helios: The World’s Most Advanced Quantum Computer,' quantinuum.com (November 2025).

3. Acharya, R. et al., 'Quantum error correction below the surface code threshold,' Nature 638, 920–926 (2025).

4. IBM, Quantum Developer Conference announcements: Nighthawk, Loon, and real-time qLDPC decoding (November 2025).

5. IonQ/Ansys, reported 12% speedup on medical device simulation using 36-qubit Forte Enterprise system (March 2025).

6. Atom Computing, demonstration of 1,180 neutral-atom qubit sites.

7. QuEra Computing, algorithmic fault-tolerance techniques for up to 100× error correction overhead reduction (2025).

8. PsiQuantum, $1 billion funding round at $7 billion valuation (September 2025).

9. DARPA, Quantum Benchmarking Initiative (QBI) Stage A/B announcements (April–November 2025).

10. Aghaee, M. et al., 'Interferometric single-shot parity measurement in an InAs–Al hybrid device,' Nature 638, 651–655 (2025).

11. APS Physics, 'Microsoft’s Claim of a Topological Qubit Faces Tough Questions,' APS Global Physics Summit (March 2025).

12. Legg, H. et al., University of St Andrews, critique of Microsoft’s Topological Gap Protocol (2025).

13. University of New South Wales, analysis of Majorana qubit decoherence times (2025).

14. Hensinger, W., University of Sussex, quoted on topological qubit timeline (2025).

15. Quantinuum researchers demonstrate quantum computations with dozens of protected logical qubits, The Quantum Insider (March 10, 2026).

16. IonQ, 'IonQ Achieves Key Photonic Interconnect Milestone, Demonstrating Networked Quantum Systems Using Entanglement,' ionq.com (April 14, 2026).

17. DARPA, Heterogeneous Architectures for Quantum (HARQ) program announcement (April 14, 2026); 19 performer teams across 15 organizations; IonQ selection for diamond-NV quantum memory.

18. Infleqtion and Churchill Capital Corp X, Business Combination completion press release (February 13, 2026); NYSE trading as INFQ began February 17, 2026.

19. Infleqtion, '100-qubit operational quantum computer delivered to UK National Quantum Computing Centre,' infleqtion.com (March 2026); The Quantum Insider, March 16, 2026.

20. Infleqtion, '2026 revenue guidance of $40 million' (FY2025 revenue $32.5M, 2026 guidance $40M), investor relations release (April 8, 2026); Royal Navy Tiqker atomic-clock deployment (October 2025). Revenue includes both quantum computing services/hardware and quantum sensing products; a computing-only split has not been publicly disclosed.

21. Rigetti Computing, Q4 2025 / FY 2025 financial results and Cepheus-1 108-qubit system launch (April 7, 2026); C-DAC India 108-qubit order disclosure.

22. Honeywell / Quantinuum, confidential S-1 filing for proposed IPO of Quantinuum (January 14, 2026).

23. IQM Quantum Computers and Real Asset Acquisition Corp. (Nasdaq: RAAQ), SPAC merger announcement at ~$1.8 billion pre-money equity valuation (February 23, 2026), CNBC.

24. Mizuho price-target revisions on IonQ, Rigetti, D-Wave (April 2026); sector rally on April 14–15, 2026 coverage (24/7 Wall St).

25. NVIDIA, 'Scientific Supercomputing Centers Embrace NVQLink with Grace Blackwell and Quantum Processors,' nvidianews.nvidia.com (November 17, 2025).

26. NVIDIA, 'NVIDIA Launches Ising, the World's First Open AI Models to Accelerate the Path to Useful Quantum Computers,' nvidianews.nvidia.com (April 14, 2026).

27. IonQ, 'IonQ Publishes Definitive Technical Report, Establishing Its Fault-Tolerant Quantum Computing Trajectory' (April 22, 2026).

28. IBM Quantum Roadmap, 2026 strategic milestones.