Chapter II: The Cascade - What Happens When Quantum Gets Useful

When quantum computers become sufficiently powerful (likely in the range of hundreds to thousands of error-corrected logical qubits operating at error rates below 10⁻⁶), several feedback loops could accelerate progress beyond linear extrapolation. Understanding these cascades is essential for forecasting the industry's trajectory in the late 2020s and early 2030s.

The most interesting phase of any technology is when it starts improving itself: cars got better when cars delivered better parts, and AI got better when AI designed better chips. Quantum computing could enter the same self-reinforcing cycle.

Feedback Loop 1: Quantum Simulates Materials → Better Qubits → Better Quantum Computers

This is the most direct self-reinforcing cycle. Quantum computers are uniquely suited to simulating quantum systems, the very physics that governs the materials from which qubits are made. Princeton's November 2025 coherence breakthrough came from a materials science insight (replacing niobium with tantalum). Future materials discoveries (new superconducting compounds, better semiconductor heterostructures, novel topological phases) could yield further order-of-magnitude improvements in qubit performance. Today, materials discovery relies on classical simulation (density functional theory, molecular dynamics) supplemented by experiment. These classical methods scale poorly for strongly correlated quantum systems, which are exactly the systems most relevant to superconductor physics and qubit design. A quantum computer with 1,000+ logical qubits at sufficient fidelity could simulate these systems directly, potentially identifying materials that current methods cannot.

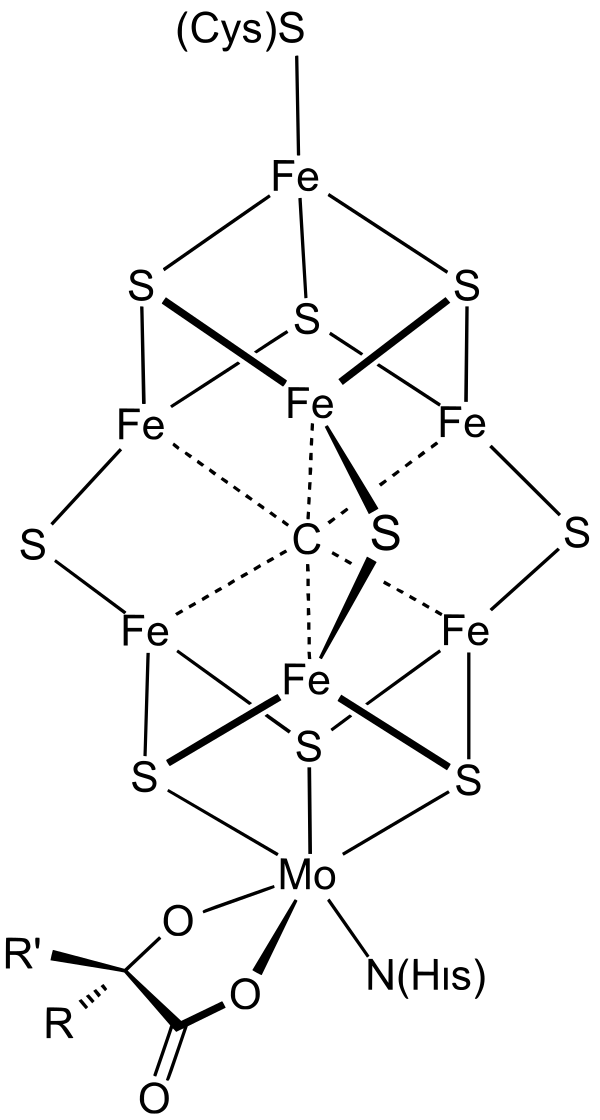

Princeton's tantalum substitution made qubits last dramatically longer, and it was found the old-fashioned way, through lab experimentation. A powerful enough quantum computer could find the next wonder material much faster. When the machine you're improving can help design its own improvements, progress starts compounding.

But what does "simulate a molecule" actually mean? It means calculating how electrons behave: where they sit, how they interact, how much energy different configurations require. This matters because electron behavior determines everything: whether a drug binds to a protein, whether a material conducts electricity, whether a catalyst speeds up a reaction. Classical computers can approximate this for small molecules, but the cost of an exact calculation grows exponentially with size. A quantum computer could directly model complex molecules like FeMoco[5], the active site in nitrogen-fixing enzymes and relevant to fertilizer production, because quantum computers naturally speak the same "language" as electrons[6].
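To make the exponential cost concrete, here is a minimal Python sketch of how much memory a classical machine would need just to store the full quantum state of an n-qubit system. It assumes brute-force state-vector storage with one 16-byte complex number per basis state; the function names are illustrative and not drawn from any library. Approximate classical methods avoid storing the full state, which is part of why they struggle with strongly correlated systems.

```python
# Illustrative only: memory needed to hold a full quantum state vector classically.
# Assumption: one complex128 amplitude (16 bytes) per basis state, no compression.

def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes required to store all 2**n amplitudes of an n-qubit state."""
    if n_qubits < 0:
        raise ValueError("n_qubits must be non-negative")
    return (2 ** n_qubits) * bytes_per_amplitude


def human_readable(num_bytes: int) -> str:
    """Format a byte count with binary prefixes (KiB, MiB, ...)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB", "ZiB", "YiB"]
    value = float(num_bytes)
    for unit in units:
        # Stop at the first unit that fits, or at the largest unit available.
        if value < 1024 or unit == units[-1]:
            return f"{value:.1f} {unit}"
        value /= 1024


if __name__ == "__main__":
    # 100 qubits is the low end of the FeMoco active-space estimate in note 5.
    for n in (10, 30, 50, 100):
        print(f"{n:>3} qubits -> {human_readable(statevector_bytes(n))}")
```

Under these assumptions, 30 qubits need 16 GiB and 50 qubits need 16 PiB; every additional qubit doubles the requirement, so a 100-qubit state is far beyond any conceivable classical memory.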

Estimated timeline for loop closure: This loop likely cannot close until we have machines with at least 1,000 logical qubits at error rates below 10⁻⁶, plausibly in the late 2020s to early 2030s under the base-case scenario. When it does close, the acceleration could be substantial, potentially compressing what would otherwise be decades of materials science into years.

Feedback Loop 2: Revenue → Investment → Faster Progress

The quantum computing industry has operated primarily on investment capital rather than revenue. This is beginning to change, though cautiously. Quantinuum's Helios launched with enterprise access agreements from Amgen, BMW Group, JPMorgan Chase[4], and SoftBank, though these are primarily exploratory partnerships for research and evaluation, not production deployments generating demonstrated business ROI. D-Wave's quantum annealing systems have been in commercial use for optimization problems, though no enterprise customer has publicly demonstrated that quantum computing delivers a cost-effective advantage over classical alternatives.

Commercial purchases of quantum computing systems and cloud access are estimated at $650–850 million in 2024. McKinsey estimates quantum computing company revenue at $650–750 million; broader market definitions that include government procurement, academic installations, and cloud subscriptions push the figure higher. Either way, the total does not consist solely of enterprise production deployments.

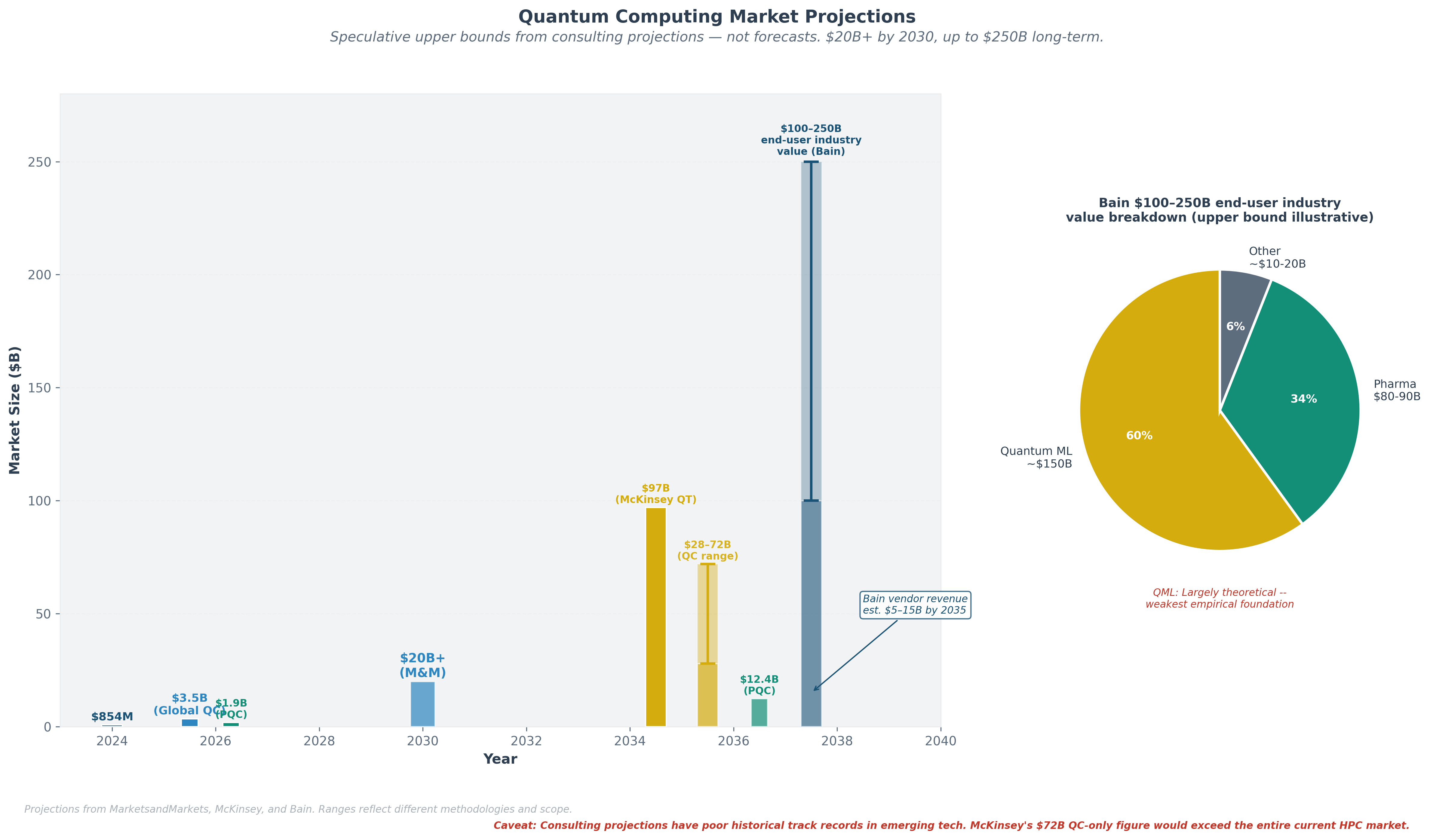

Bain estimates that quantum computing could unlock $100–250 billion[2] in market value across end-user industries: pharmaceuticals ($80–90 billion potential), financial services, logistics, and materials science. This represents economic value created for those industries, not quantum computing vendor revenue, which Bain separately estimates at $5–15 billion by 2035. McKinsey projects quantum technologies could generate up to $97 billion[1] in revenue by 2035, with quantum computing capturing $28–72 billion of that total (a wide range reflecting deep uncertainty).

These projections warrant significant skepticism. They are top-down estimates produced by consulting firms, not bottom-up revenue models built from identified customers solving identified problems at identified price points. For context, the upper end of McKinsey's $28–72 billion quantum computing revenue range would exceed the entire current global high-performance computing (HPC) market (~$50–60 billion). Consulting-firm projections have a poor historical track record for emerging technologies, and quantum computing market estimates have been revised downward repeatedly over the past decade. These figures should be understood as speculative upper bounds, not forecasts.

Even earlier, more modest demonstrations of quantum advantage in chemistry or optimization could generate revenue sufficient to sustain the investment cycle, but the gap between current revenue (~$650–850M, largely exploratory) and these projections is vast.
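One way to gauge the size of that gap is the compound annual growth rate it implies. The sketch below is illustrative arithmetic only. It uses the dollar figures quoted in this section and assumes, for simplicity, that the 2024 purchase estimate and McKinsey's 2035 revenue projection measure the same thing, which they may not. The function and constant names are hypothetical.

```python
# Illustrative arithmetic only; dollar figures are the ones quoted in the text.
# Assumption: the 2024 and 2035 figures are treated as directly comparable.

def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns `start` into `end` over `years`."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end, and years must be positive")
    return (end / start) ** (1 / years) - 1


CURRENT_2024_BN = (0.65, 0.85)    # estimated 2024 purchases, $ billions
MCKINSEY_2035_BN = (28.0, 72.0)   # projected 2035 quantum computing revenue, $ billions
YEARS = 2035 - 2024               # 11 years of compounding

if __name__ == "__main__":
    # Slowest case: highest starting point growing to the lowest projection.
    slowest = implied_cagr(CURRENT_2024_BN[1], MCKINSEY_2035_BN[0], YEARS)
    # Fastest case: lowest starting point growing to the highest projection.
    fastest = implied_cagr(CURRENT_2024_BN[0], MCKINSEY_2035_BN[1], YEARS)
    print(f"Implied annual growth: {slowest:.1%} to {fastest:.1%}")
```

Under these assumptions, reaching McKinsey's range would require revenue to compound at roughly 37% to 53% a year for eleven consecutive years.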

Right now, quantum companies burn far more cash than they earn. Early "commercial" revenue is mostly exploratory: companies testing the technology, not deploying it for proven business value. The key milestone is when revenue becomes self-sustaining, which is likely several years away.

The venture capital environment reflects this expectation: $3.77 billion in equity funding[2] in the first nine months of 2025, with a decisive shift toward larger, later-stage deals (Series B and beyond now accounting for approximately 63% of quantum venture investment). This is the capital market betting that commercialization is approaching.

Feedback Loop 3: Quantum + AI Convergence

The interaction between quantum computing and artificial intelligence runs in both directions. AI is already being used to optimize quantum hardware: Google uses machine learning and reinforcement learning algorithms for Willow's error correction calibration. Quantinuum is developing "Generative Quantum AI" (GenQAI), using quantum computers to generate training data that enhances classical AI models.

In the other direction, quantum computers could eventually accelerate certain AI workloads. However, this must be stated with caution: quantum machine learning is, by Bain's assessment, "mostly theoretical" with "key algorithmic and data-loading bottlenecks." It accounts for roughly $150 billion at the upper end of Bain's projected $100–250 billion in end-user industry value but has the weakest empirical foundation. Research on "barren plateaus," a phenomenon where the mathematical landscape guiding a quantum algorithm becomes so flat and featureless that the algorithm cannot find its way to a solution, has identified a potentially fundamental barrier[3] for variational quantum algorithms (discussed in detail in Section 3.3). The implication is stark: over half of Bain's upper-end projected value rests on a category of algorithms that may face fundamental scalability barriers regardless of hardware quality.

AI is already helping build better quantum computers. The reverse—quantum computers making AI better—is the bigger prize but remains unproven. NVIDIA is betting on the convergence, backing Quantinuum, PsiQuantum, and QuEra in a single week in September 2025.

NVIDIA's Jensen Huang, once bearish on near-term quantum computing, reversed course in 2025, stating that classical supercomputer and quantum computer hybrids "could be the future" and that it is "essential for us to connect a quantum computer directly to a GPU supercomputer." NVIDIA's investment in Quantinuum, PsiQuantum, and QuEra in September 2025 put capital behind that conviction.

Speculative upper bounds from consulting projections. The top of McKinsey's $28–72B QC range would exceed the entire current HPC market. Bain's $100–250B represents end-user industry value, not vendor revenue.

Notes

1. McKinsey & Company, Quantum Technology Monitor 2025.

2. Bain & Company, Technology Report 2025: quantum computing market analysis.

3. Cerezo, M. et al., 'Cost function dependent barren plateaus in shallow parametrized quantum circuits,' Nature Communications 12, 1791 (2021).

4. JPMorgan Chase, quantum computing research initiatives and enterprise partnerships (2025).

5. Reiher, M. et al., 'Elucidating Reaction Mechanisms on Quantum Computers,' PNAS 114(29), 7555–7560 (2017). Estimated ~100–200 logical qubits needed to represent the active space of FeMoco (the iron-molybdenum cofactor in nitrogenase), one of the most-cited benchmarks in quantum chemistry resource estimation. Full error-corrected implementations would require millions of physical qubits.

6. Feynman, R., 'Simulating Physics with Computers,' International Journal of Theoretical Physics 21, 467–488 (1982). Feynman's original argument that quantum systems require quantum computers to simulate efficiently.