Chapter III: The Challenges

Before examining quantum computing's strategic challenges—capital requirements, security implications, the algorithm gap, and geopolitics—we must first confront the technical walls that could stall hardware progress regardless of funding or strategy.

Quantum's Binding Constraints: The Walls

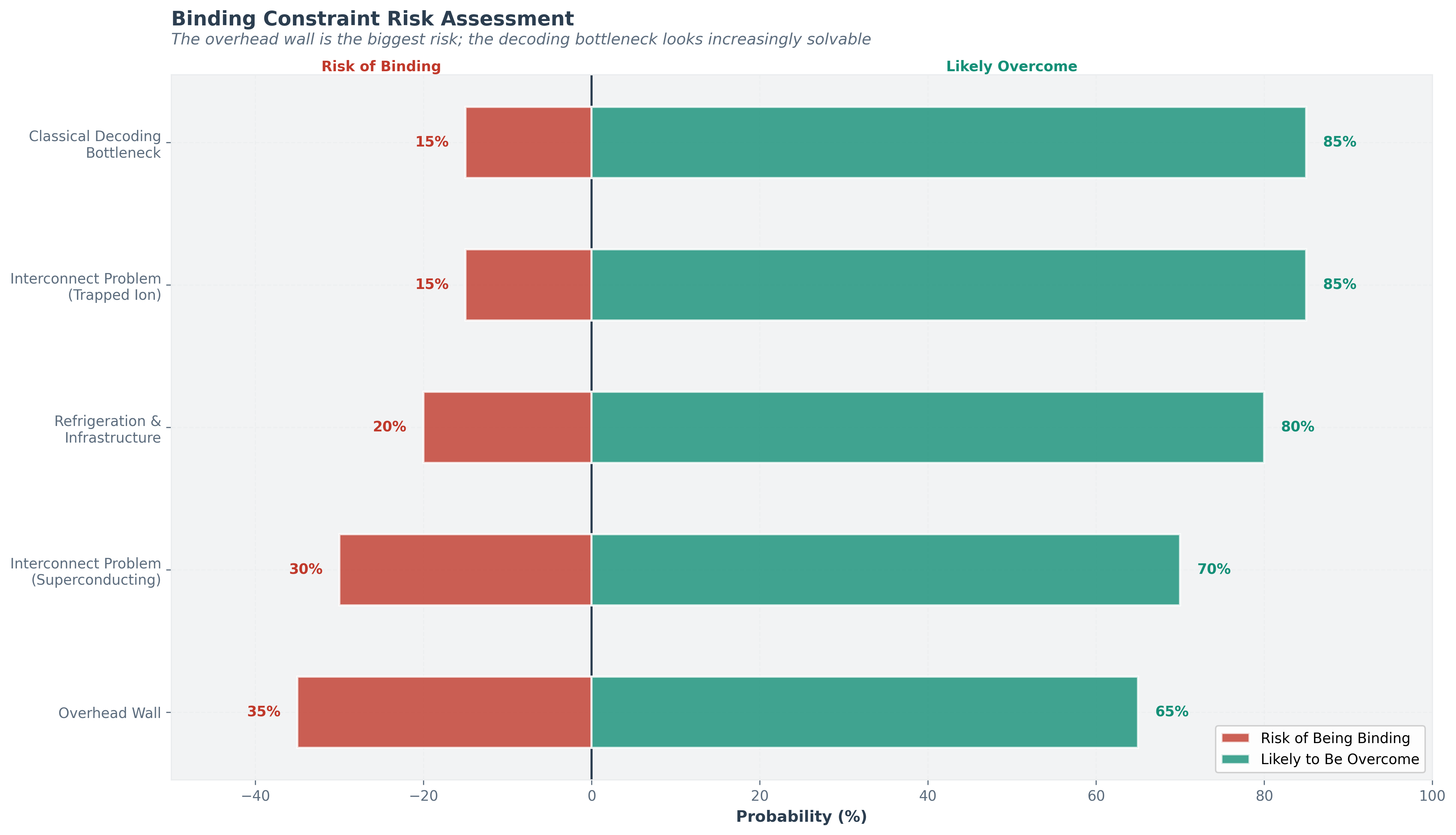

Every technology hits binding constraints that can stall progress. Quantum computing has four. This section describes each one honestly: what it is, why it might be solvable, and the risk that it isn't. Understanding these walls is essential for calibrated forecasting.

Wall 1: The Overhead Wall

The overhead wall is the single biggest barrier to practical quantum computing. For superconducting systems using surface codes, the physical-to-logical qubit ratio is approximately 1,000:1 for high-fidelity computation. This means a commercially useful 1,000-logical-qubit machine would require roughly one million physical qubits, vastly beyond anything built or planned for the near term.

Why insiders are bullish it can be broken: Three developments are converging. First, Quantinuum has demonstrated a 2:1 encoding ratio in trapped ions, proving that much more efficient codes exist and work. Second, IBM's qLDPC codes promise dramatically better encoding rates for superconducting systems, and the Loon processor demonstrates the hardware needed to implement them. Third, QuEra's algorithmic fault-tolerance techniques claim up to 100× overhead reduction. If any of these approaches scales, the overhead wall breaks.

Historical analogy: In classical computing, the challenge of reliable transistor scaling was met not by making individual transistors perfect, but by developing error-correcting codes (ECC memory, parity bits) and redundant architectures. The quantum field is following an analogous path, but the physics is harder.

Calibrated confidence: The overhead wall is being undermined from multiple directions at once. The most likely outcome is that overhead ratios decline to between 10:1 and 100:1 within this decade through a combination of improved fidelities and better codes, making machines of hundreds of logical qubits feasible. It is increasingly unlikely that the 1,000:1 ratio assumed in worst-case analyses will persist. Probability that the wall is substantially broken by 2030: ~60-70%. (These are the author's subjective assessments based on the trendline data surveyed for this report, not calibrated forecasts.)
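To make the overhead arithmetic concrete, the short Python sketch below converts the ratios quoted in this section into physical qubit counts for a 1,000-logical-qubit machine. The helper name `physical_qubits_needed` is hypothetical, and applying each ratio uniformly at this scale is a simplifying assumption: the 2:1 figure, for example, was demonstrated on a far smaller system.

```python
# Illustrative arithmetic only. The ratios come from the text above;
# `physical_qubits_needed` is a hypothetical helper, not a vendor tool.

def physical_qubits_needed(logical_qubits: int, overhead_ratio: float) -> int:
    """Physical qubits required at a given physical-to-logical ratio."""
    return round(logical_qubits * overhead_ratio)

TARGET_LOGICAL = 1_000  # the "commercially useful" machine discussed above

# Physical qubits per logical qubit, as quoted in this section.
SCENARIOS = {
    "surface code today (~1,000:1)": 1_000,
    "projected upper bound (100:1)": 100,
    "projected lower bound (10:1)": 10,
    "trapped-ion demonstration (2:1)": 2,
}

for label, ratio in SCENARIOS.items():
    count = physical_qubits_needed(TARGET_LOGICAL, ratio)
    print(f"{label:34s} -> {count:>9,} physical qubits")
```

The spread between the first and last rows is why the encoding ratio, rather than raw qubit count, is treated here as the variable to watch.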

Wall 2: The Classical Decoding Bottleneck

Error correction requires a fast classical computer to decode error syndromes in real time. If the decoder cannot keep up with the quantum processor's clock speed, errors accumulate faster than they can be corrected. This is particularly challenging for superconducting systems, where gate times are measured in tens of nanoseconds.

While the quantum computer runs, a regular computer has to watch for errors and fix them in real time. If this "umpire" is too slow to call the plays, the game falls apart. Recent breakthroughs have made the umpire dramatically faster, and this wall is looking increasingly conquerable.
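The "umpire falls behind" failure mode can be sketched as a simple queue. The toy model below is an assumption-laden illustration, not a description of any real control stack: one syndrome round arrives at a fixed interval, a single decoder handles rounds one at a time, and the 1,000 ns round time and 1,500 ns slow-decoder figure are hypothetical. The 480 ns value echoes the IBM decoding latency cited in this section, though that figure may not map one-to-one onto a per-round decode time.

```python
def backlog_after(rounds: int, round_time_ns: int, decode_time_ns: int) -> int:
    """Count syndrome rounds still undecoded shortly after the last one arrives.

    Toy model: round k arrives at k * round_time_ns, and a single decoder
    processes rounds in order, taking decode_time_ns for each. The backlog is
    measured one decode time after the final round arrives, so a decoder that
    keeps pace reports zero.
    """
    decoder_free_at = 0
    finish_times = []
    for k in range(1, rounds + 1):
        arrival = k * round_time_ns
        start = max(arrival, decoder_free_at)  # wait for data or for the decoder
        decoder_free_at = start + decode_time_ns
        finish_times.append(decoder_free_at)
    checkpoint = rounds * round_time_ns + decode_time_ns
    return sum(1 for t in finish_times if t > checkpoint)


ROUND_TIME_NS = 1_000  # hypothetical syndrome-extraction cycle

for decode_ns in (480, 1_500):
    for rounds in (10_000, 20_000):
        pending = backlog_after(rounds, ROUND_TIME_NS, decode_ns)
        print(f"decode={decode_ns} ns, rounds={rounds}: backlog={pending}")
```

In this model a decoder faster than the round time never accumulates a backlog, while a slower one falls behind in proportion to how long the computation runs, which is why decoder latency matters more for long algorithms.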

Current state: IBM achieved real-time qLDPC decoding[8] in under 480 nanoseconds using an AMD FPGA, a 10× speedup over previous approaches, delivered a year ahead of schedule. Quantinuum integrates NVIDIA Grace Hopper GPUs into the Helios control engine for real-time error decoding, achieving a 3%+ improvement in logical fidelity.

Calibrated confidence: This wall is likely to be overcome. Classical computing power continues to improve, and dedicated decoder ASICs are a natural next step. The decoder problem is a well-defined engineering challenge, not a fundamental physics barrier. Probability of this being a binding constraint by 2030: ~15%.

Wall 3: The Interconnect Problem

Scaling beyond a single quantum chip requires quantum interconnects: the ability to entangle qubits on separate chips and maintain coherence across the connection. This is analogous to the classical networking problem but far harder, because quantum information cannot be copied (the no-cloning theorem) and must be transmitted without measurement.

Today's quantum computers are standalone: each chip works in isolation. To build bigger machines, we need to connect chips while preserving quantum properties. It's like moving a soap bubble between rooms without popping it, which is extraordinarily hard because quantum data cannot be copied for backup.

IBM's roadmap addresses this with modular architectures: Kookaburra (2026) is designed as the first modular quantum processor for logical qubit storage, and Cockatoo (2027) aims to demonstrate entanglement between separate processors. Quantinuum's QCCD architecture approaches the problem differently, scaling through junctions within a single trap rather than interconnecting separate chips.

Calibrated confidence: Quantum interconnects are an open engineering challenge. Demonstration at production scale is likely three to five years away. This is more likely to slow progress than stop it. Probability of this being a binding constraint: ~30% for superconducting architectures, ~15% for trapped ions (which scale differently).

Wall 4: The Refrigeration and Infrastructure Wall

Superconducting qubits require millikelvin temperatures. Current dilution refrigerators can cool systems of hundreds of qubits. Scaling to millions of qubits would require either vastly larger cryogenic systems or modular approaches with quantum interconnects between refrigerators. Helium-3, the primary coolant for dilution refrigerators, is scarce and geopolitically concentrated.

Some quantum computers need to be colder than outer space, requiring specialized refrigerators that are expensive, complex, and rely on a rare gas. Scaling up means building refrigerators far larger than anything attempted before, or networking many smaller ones together. Other approaches avoid the extreme cold but face their own infrastructure challenges.

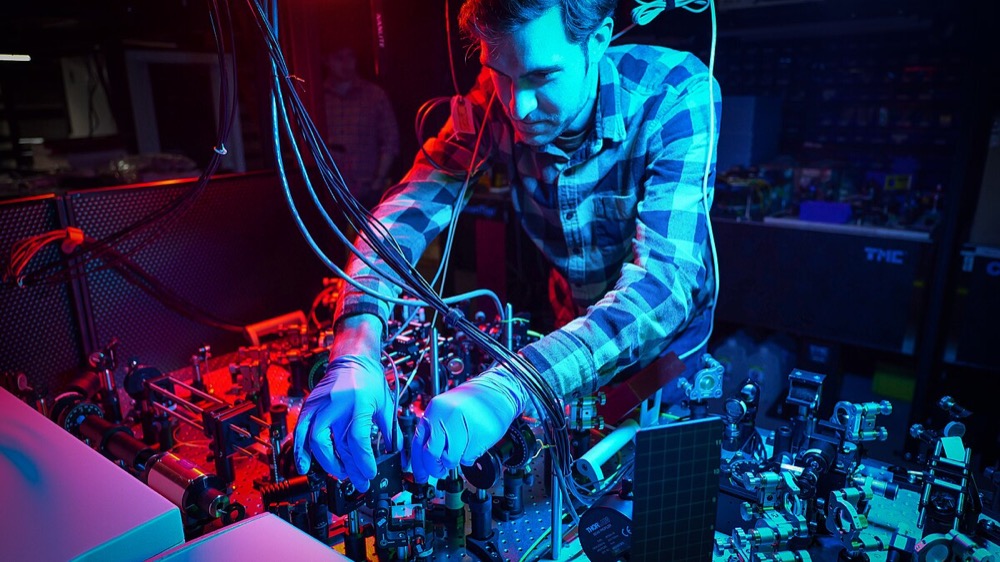

Trapped ions, neutral atoms, and photonic systems have different but non-trivial infrastructure requirements: ultra-high vacuum, precision laser systems, and specialized optics, respectively. A single Quantinuum Helios system involves a room full of lasers, mirrors, optical fiber, and vacuum equipment.

Calibrated confidence: Infrastructure is a practical bottleneck that will slow deployment but not prevent it. The analogy to early mainframe computing is apt: early quantum computers, like early classical computers, will be large, expensive, room-sized installations accessible primarily via cloud. Probability of this being the binding constraint: ~20%.

The overhead wall is the biggest risk; the decoding bottleneck looks increasingly solvable.

3.1 The Race to the Quantum Data Center: Capital and Infrastructure

The capital dynamics of quantum computing differ from AI in important ways. AI’s scaling thesis requires trillions of dollars in electricity and GPU infrastructure. Quantum computing’s capital requirements are currently orders of magnitude smaller—billions, not trillions—but the per-machine cost is extremely high and scaling is harder. Unlike AI data centers, which are essentially warehouses full of GPUs with standardized power and cooling, quantum computing facilities require bespoke infrastructure: dilution refrigerators for superconducting systems, ultra-high vacuum and precision laser arrays for trapped ions, cleanroom fabrication for chip production. These are not off-the-shelf components. The supply chain for quantum-specific hardware—cryogenic electronics, specialized optical components, high-purity materials—is thin and fragile.

Building an AI data center is expensive but straightforward: buy hardware, plug it in, and go. Building a quantum computing facility is more like building a particle physics experiment—every component is custom, the supply chains are tiny, and the engineering is bespoke.

Bottleneck resources:

- Helium-3, essential for dilution refrigerators, is scarce globally and primarily sourced as a byproduct of nuclear weapons maintenance—making it geopolitically sensitive.

- Skilled quantum engineers are in extreme short supply, estimated at only a few thousand globally with the required expertise in quantum hardware.

- Specialized fab capacity for quantum-specific chip designs is limited to a handful of facilities worldwide.

IBM's shift to 300mm wafer fabrication at the Albany NanoTech Complex represents an important strategic move: by using industrial-grade semiconductor tooling, the company has doubled its R&D cycle speed and achieved a ten-fold increase in the physical complexity of its quantum chips. This kind of manufacturing infrastructure investment will be essential as quantum systems scale.

3.2 The Cryptographic Threat: Security Implications

This is the section most relevant to policymakers. It requires precision and the absence of hype.

The threat: A sufficiently powerful quantum computer running Shor's algorithm could break RSA and elliptic curve cryptography (ECC), which underpins virtually all internet security: banking, communications, government systems, military command and control.

Almost everything you do online is protected by encryption that relies on certain math problems being impossibly hard for regular computers. A powerful enough quantum computer could solve those problems, effectively picking every digital lock on the internet. This isn't happening soon, but it's not science fiction either.

How far away? Current estimates suggest that breaking RSA-2048 requires approximately 6,000+ logical qubits[1] operating at logical error rates of ~10⁻¹⁵ (achieved through error correction, not raw hardware fidelity), or equivalently roughly 20 million physical qubits at physical error rates of ~10⁻³ for current superconducting architectures using surface codes. We are at 48 error-corrected logical qubits today (Quantinuum Helios). A cryptographically relevant quantum computer is not imminent. The most credible estimates place it at 15 to 25+ years away based on current hardware trajectories, assuming continued steady progress.
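The figures in this paragraph imply a few derived quantities that are worth seeing side by side. The sketch below is plain arithmetic on the numbers quoted above. The doubling-time calculation additionally assumes steady exponential growth in logical qubit count, which is an assumption of this illustration rather than a claim from the cited estimates, and it ignores the separate requirement of reaching ~10⁻¹⁵ logical error rates.

```python
import math

LOGICAL_NEEDED = 6_000        # logical qubits for RSA-2048, as quoted above
PHYSICAL_NEEDED = 20_000_000  # physical qubits in the surface-code estimate
LOGICAL_TODAY = 48            # error-corrected logical qubits cited above

implied_overhead = PHYSICAL_NEEDED / LOGICAL_NEEDED  # physical per logical
scale_gap = LOGICAL_NEEDED / LOGICAL_TODAY           # growth factor still required
doublings = math.log2(scale_gap)                     # doublings to close the gap


def implied_doubling_time_years(years_to_target: float) -> float:
    """Doubling time of logical qubit count implied by a given timeline.

    Assumes smooth exponential growth, purely for illustration.
    """
    return years_to_target / doublings


print(f"Implied overhead: about {implied_overhead:,.0f}:1")
print(f"Scale gap: {scale_gap:.0f}x, or {doublings:.1f} doublings")
for years in (15, 25):
    print(f"{years}-year timeline -> doubling every "
          f"{implied_doubling_time_years(years):.1f} years")
```

Read this way, the 15-to-25-year range corresponds to logical qubit counts doubling roughly every two to four years under the stated assumption.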

The "harvest now, decrypt later" threat: This is the real urgency. Adversaries can intercept and store encrypted data today, waiting to decrypt it when quantum computers become powerful enough. For data that must remain secret for decades (national security secrets, medical records, financial data, diplomatic communications), the threat timeline is effectively today. Every day of encrypted data captured before post-quantum cryptography migration is a day of future vulnerability.

The scenario that keeps intelligence agencies up at night: an adversary records your encrypted communications today—they can't read them yet. Years later, a quantum computer breaks the encryption and they decrypt everything they've been hoarding. For secrets that must stay secret for decades, the threat is now, even though the quantum computer won't exist for years.

Post-quantum cryptography (PQC): NIST finalized PQC standards in 2024[2], establishing algorithms designed to resist both classical and quantum attacks: ML-KEM (key encapsulation), ML-DSA (digital signatures), and SLH-DSA (hash-based signatures). The PQC market is valued at approximately $1.9 billion in 2025, projected to reach $12.4 billion by 2035. Migration to PQC is underway but painfully slow; most enterprises have not yet begun.

The critical question: Is the PQC migration happening fast enough relative to quantum hardware progress? The honest answer: probably, but with insufficient margin. If quantum hardware progress follows the base-case scenario, there is ample time for migration. If a breakthrough accelerates the timeline (Scenario B), organizations that have not migrated by the early 2030s could be vulnerable. The prudent course—and the one recommended by NIST, NSA, and CISA—is to begin migration immediately.
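One common way to frame the "fast enough" question is Mosca's inequality: data is exposed if its required secrecy lifetime plus the time needed to migrate exceeds the time until a cryptographically relevant quantum computer exists. The sketch below applies that rule. The function name `exposed_years` and the shelf-life and migration inputs are hypothetical; only the 15- and 25-year horizons come from the estimates quoted in this section.

```python
def exposed_years(shelf_life_years: float,
                  migration_years: float,
                  years_to_crqc: float) -> float:
    """Years of exposure under Mosca's inequality (x + y > z).

    x = how long the data must stay secret,
    y = how long migration to post-quantum cryptography takes,
    z = years until a cryptographically relevant quantum computer.
    Returns 0.0 when the data is safe under these assumptions.
    """
    return max(0.0, shelf_life_years + migration_years - years_to_crqc)


# Hypothetical data classes: (secrecy lifetime, migration time) in years.
DATA_CLASSES = {
    "diplomatic archive": (25, 5),
    "short-lived session data": (5, 5),
}

for horizon in (15, 25):  # horizons quoted in this section
    for name, (shelf_life, migration) in DATA_CLASSES.items():
        gap = exposed_years(shelf_life, migration, horizon)
        status = f"exposed for {gap:.0f} years" if gap > 0 else "not exposed"
        print(f"CRQC in {horizon} years, {name}: {status}")
```

The point of the exercise is that long-lived secrets can be exposed even on the slowest timeline, while short-lived data may tolerate a later migration.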

3.3 The Algorithm Gap: Software Maturity

Even if we had a perfect 1,000-logical-qubit machine tomorrow, the honest truth is that we have very few algorithms that could immediately exploit it for problems that matter to the economy.

The awkward secret: hardware is advancing faster than software. Even a perfect quantum computer would have a limited menu of solvable problems today. It's like building the world's fastest race car but only having two racetracks—the tracks are behind schedule.

Where algorithms are most mature: Quantum simulation—modeling the behavior of molecules, materials, and quantum systems—is closest to demonstrating practical advantage. Quantinuum has used Helios to simulate high-temperature superconductivity and magnetism. The IonQ/Ansys collaboration reported a 12% speedup on a single medical device simulation instance[4], but this result has not been independently replicated, the classical baseline has not been independently verified, and a 12% margin on one problem does not yet constitute robust practical advantage. Shor's algorithm for factoring is well-understood but requires massive scale (thousands of logical qubits at extremely low error rates).

Where algorithms are least mature: Quantum machine learning remains largely theoretical, with key algorithmic and data-loading bottlenecks, despite accounting for the largest projected market value: roughly $150 billion of the upper end of Bain's $100–250 billion estimate of end-user industry value. (That estimate measures economic value created for industries such as pharma and finance that use quantum computing, not revenue earned by quantum computing vendors, which Bain puts at a far smaller $5–15 billion.) The most significant concern is a phenomenon called "barren plateaus." Research by Cerezo et al. (2021[6]; see also McClean et al. 2018[7]) has shown that variational quantum algorithms, the most widely studied class of near-term quantum algorithms, face exponentially vanishing gradients as system size increases. This may be a structural mathematical limitation rather than an engineering challenge that better hardware will solve. Quantum optimization has shown promise via quantum annealing (D-Wave) but is continuously challenged by improving classical heuristics.

Imagine trying to find the lowest point in a vast, flat desert where your compass barely works. A "barren plateau" is when the mathematical landscape that a quantum algorithm must navigate becomes so featureless that the algorithm can't tell which direction leads to a better answer. As the problem grows, the landscape gets flatter, and exponentially so. This isn't a hardware problem that better qubits can fix; it's a mathematical property of certain algorithm designs themselves. It's why the most hyped category of near-term quantum algorithms (the ones behind the largest market projections) may face fundamental limits.
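The flattening can be illustrated numerically with a simplified stand-in. The sketch below does not compute gradients of a variational circuit. Instead it samples random quantum states and measures how much a single-qubit expectation value varies from state to state, which is the concentration effect that underlies the barren-plateau results. For fully random states this variance is known to be 1/(2ⁿ + 1) for n qubits, so it halves, roughly, with each added qubit. All function names are hypothetical, and only the Python standard library is used.

```python
import random
import statistics


def random_state_probs(num_qubits: int, rng: random.Random) -> list:
    """Measurement probabilities of a uniformly random pure state.

    Normalising a vector of independent complex Gaussians gives a random
    state; only the squared magnitudes are needed for a Z measurement.
    """
    dim = 2 ** num_qubits
    weights = [rng.gauss(0, 1) ** 2 + rng.gauss(0, 1) ** 2 for _ in range(dim)]
    total = sum(weights)
    return [w / total for w in weights]


def expect_z_qubit0(probs: list) -> float:
    """Expectation of Pauli-Z on qubit 0 (lowest bit of the basis index)."""
    return sum(p if (i & 1) == 0 else -p for i, p in enumerate(probs))


def variance_of_expectation(num_qubits: int, samples: int = 2000,
                            seed: int = 0) -> float:
    """Spread of the Z expectation across many random states."""
    rng = random.Random(seed)
    values = [expect_z_qubit0(random_state_probs(num_qubits, rng))
              for _ in range(samples)]
    return statistics.pvariance(values)


for n in (2, 4, 6, 8):
    measured = variance_of_expectation(n)
    predicted = 1 / (2 ** n + 1)
    print(f"{n} qubits: measured {measured:.5f}, predicted {predicted:.5f}")
```

An optimizer needs to resolve differences of this size through repeated measurement, so an exponentially shrinking signal translates into an exponentially growing number of circuit runs.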

Classical competition

This is the uncomfortable backdrop for every quantum advantage claim—and it deserves more than a backdrop role in the analysis. Classical algorithms and hardware are not standing still; they are experiencing their own revolution driven by AI accelerators, GPU computing, and algorithmic advances.

The erosion of quantum advantage claims has been systematic and rapid. Google's 2019 Sycamore "quantum supremacy" claim—that its 53-qubit processor performed a computation in 200 seconds that would take a classical supercomputer 10,000 years—was challenged repeatedly: IBM argued the classical simulation could be done in 2.5 days with sufficient disk storage, and by 2022, Pan et al. demonstrated that tensor network methods[3] on a cluster of 512 classical GPUs could perform the same computation in approximately 15 hours—and estimated that an exascale supercomputer could do it in seconds.
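The scale of that erosion is easier to see as ratios. The sketch below converts each classical runtime quoted above into seconds and divides by Sycamore's 200 seconds. It is unit conversion only; the use of 365.25 days per year is a choice made for this illustration.

```python
SECONDS_PER_HOUR = 3_600
SECONDS_PER_DAY = 24 * SECONDS_PER_HOUR
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY  # convention chosen for this sketch

SYCAMORE_SECONDS = 200  # quantum runtime quoted above

# Classical runtime claims quoted above, converted to seconds.
CLASSICAL_ESTIMATES = {
    "Google 2019 estimate (10,000 years)": 10_000 * SECONDS_PER_YEAR,
    "IBM rebuttal (2.5 days)": 2.5 * SECONDS_PER_DAY,
    "Pan et al. 2022, 512 GPUs (15 hours)": 15 * SECONDS_PER_HOUR,
}


def advantage_factor(classical_seconds: float) -> float:
    """How many times longer the classical run takes than Sycamore's."""
    return classical_seconds / SYCAMORE_SECONDS


for label, seconds in CLASSICAL_ESTIMATES.items():
    print(f"{label:38s} -> {advantage_factor(seconds):,.0f}x")
```

On these figures the claimed advantage shrank from a factor in the billions to a factor in the hundreds within three years, before counting the exascale estimate.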

The only remaining robust quantum computational advantage—random circuit sampling (RCS)—has no practical application.

The "dequantization" literature, beginning with Ewin Tang's 2019 breakthrough showing that quantum-inspired classical algorithms could match proposed quantum speedups for recommendation systems, has continued to narrow the theoretical advantage window. Multiple proposed quantum speedups for linear algebra, machine learning, and optimization problems have been partially or fully matched by classical approaches.

Classical computing is not merely maintaining pace—it is accelerating. NVIDIA's data center revenue has grown from ~$15 billion in fiscal year 2023 (ending January 2023) to over $115 billion in fiscal year 2025 (ending January 2025), driven by AI demand that is simultaneously pushing GPU, TPU, and specialized accelerator performance forward at rates that quantum computing must outpace to deliver commercial value.

Tensor network methods, variational classical algorithms, and AI-driven simulation techniques represent a moving target that quantum computers must clear—not a static benchmark.

The symmetric risk this creates is underappreciated: every year that fault-tolerant quantum computers are delayed, classical computing has an additional year to push the advantage threshold further out. The honest quantum analyst must always ask: "Can a classical computer do this better?" Today, the answer is still "yes" for nearly all practical problems. The question is whether quantum hardware can scale fast enough to outrun classical improvements—and that race is far from decided.

3.4 The Geopolitical Dimension: Who Wins the Quantum Race?

United States: Dominant in private-sector diversity (Google, IBM, Microsoft, Quantinuum, IonQ, Rigetti, PsiQuantum, QuEra, Atom Computing, D-Wave) and venture capital funding ($1.7 billion of $2.6 billion global VC in 2024). Home to DARPA QBI, CHIPS and Science Act quantum funding, NSF quantum information science centers, and major national laboratories. JPMorgan recently announced plans[5] to invest up to $10 billion across strategic technology sectors including quantum computing.

China: Estimated $15 billion+ in government investment (unconfirmed officially; estimates range from $4–17 billion in actual expenditure). USTC (Hefei) is world-class, having claimed quantum advantage with Jiuzhang (photonic) and Zuchongzhi (superconducting). Strong in quantum communications, operating a QKD satellite network. In March 2025, China announced a national venture capital guidance fund mobilizing 1 trillion yuan ($138 billion). This fund, however, covers all strategic emerging technologies (AI, semiconductors, biotech, 6G, quantum, and others) over a 20-year horizon, with only ~$14.2 billion in actual government seed capital and the remainder to be leveraged from local governments and private sources. No quantum-specific allocation has been disclosed. There is less visibility into private-sector activity and limited collaboration with the Western research community.

Europe: EU Quantum Flagship (>€1 billion). Strong academic base and growing startup ecosystem: IQM (Finland), Pasqal (France), Alice & Bob (France), Quantum Motion (UK). Multiple DARPA QBI Stage B companies are non-US-headquartered (Nord Quantique and Xanadu in Canada, Quantum Motion in the UK). Concern about “brain drain” to the US remains persistent.

Other notable programs:

- Japan ($7.4 billion announced in 2025)

- Australia (PsiQuantum government partnership, Silicon Quantum Computing, Diraq)

- UK (National Quantum Strategy, Quantinuum HQ)

- Singapore ($222 million), South Korea, Israel

The U.S. has the most companies and private investment. China has the biggest government program. Europe has strong research but struggles to commercialize it. The key risk: quantum technology could become another front in great-power competition, with export controls limiting progress on both sides.

Key asymmetry: The US leads in private-sector diversity, VC funding, and the number of distinct hardware approaches being pursued commercially. China leads in government-directed scale and quantum communications. Europe leads in academic research but lags in commercialization. The risk of technology decoupling—restricted access to quantum hardware, talent, or materials across geopolitical blocs—is real and growing.

Notes

1. Gidney, C. & Ekerå, M., 'How to factor 2048 bit RSA integers in 8 hours using 20 million noisy qubits,' Quantum 5, 433 (2021).

2. NIST, Post-Quantum Cryptography Standards: ML-KEM, ML-DSA, SLH-DSA (finalized 2024).

3. Pan, F. et al., 'Solving the sampling problem of the Sycamore quantum circuits,' Physical Review Letters 129, 090502 (2022).

4. IonQ/Ansys, reported 12% speedup on medical device simulation using 36-qubit Forte Enterprise system (March 2025).

5. JPMorgan Chase, quantum computing research initiatives and enterprise partnerships (2025).

6. Cerezo, M. et al., 'Cost function dependent barren plateaus in shallow parametrized quantum circuits,' Nature Communications 12, 1791 (2021). Key result: the variance of cost function gradients decreases exponentially with qubit count for typical parametrized circuits, making optimization intractable at scale.

7. McClean, J. R. et al., 'Barren plateaus in quantum neural network training landscapes,' Nature Communications 9, 4812 (2018). The foundational paper identifying exponentially vanishing gradients as a property of random parametrized quantum circuits, independent of hardware noise.

8. IBM, Quantum Developer Conference announcements: Nighthawk, Loon, and real-time qLDPC decoding (November 2025).